Tokens in OpenAI

Summary #

A single English word can take up anywhere from 1 to 3 tokens.

According to OpenAI, 1,000 tokens correspond to roughly 750 words of text. However, this figure varies with the language and the complexity of the text.

words ≈ 3/4 × tokens (equivalently, tokens ≈ 4/3 × words)
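As a rough illustration, the 1,000 tokens to 750 words rule of thumb can be turned into a small estimator. This is only a sketch: the function names `estimate_words` and `estimate_tokens` are made up for this note, and the ratio is an average for English text, not an exact count.

```python
import math

# Rule of thumb from the summary: 1,000 tokens ~ 750 words (English text).
WORDS_PER_TOKEN = 750 / 1000  # 0.75


def estimate_words(tokens: int) -> float:
    """Approximate number of English words that fit in `tokens` tokens."""
    return tokens * WORDS_PER_TOKEN


def estimate_tokens(words: int) -> int:
    """Approximate token count for `words` English words, rounded up."""
    # Multiply by 4/3 (the inverse of 3/4) and round up to be conservative.
    return math.ceil(words * 4 / 3)


if __name__ == "__main__":
    print(estimate_words(1000))  # 750.0
    print(estimate_tokens(750))  # 1000
```

For an exact count, the text has to be run through the model's actual tokenizer. The estimate is only useful for quick budgeting.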

OCR of Images #

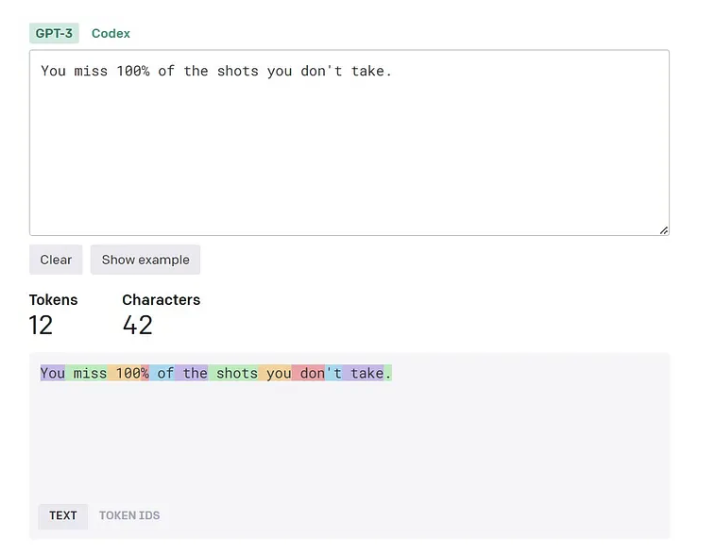

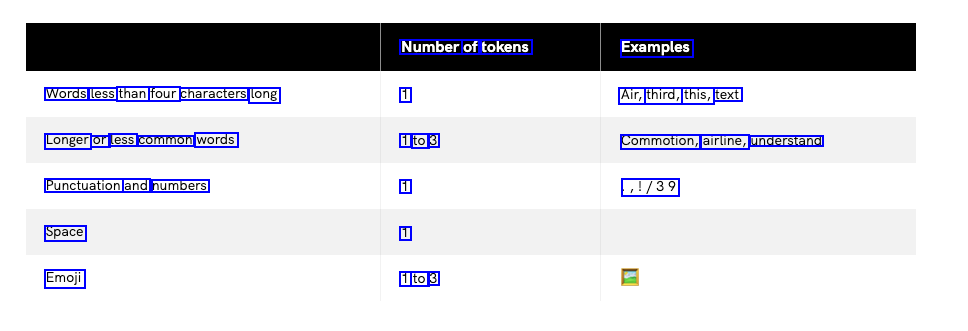

2024-05-16_10-35-18_screenshot.png #

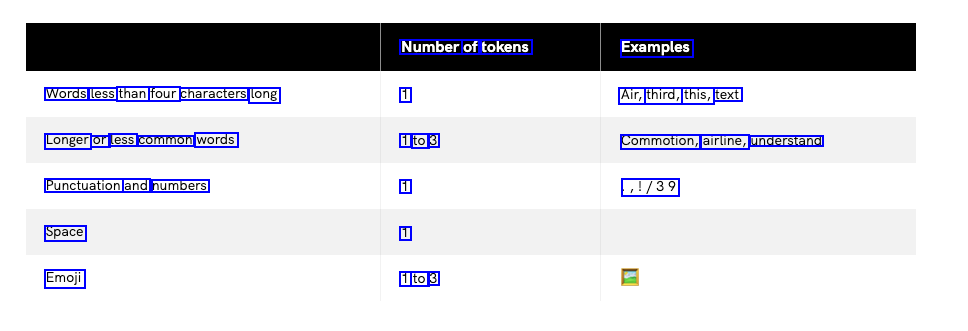

| Text type | Number of tokens | Examples |
| --- | --- | --- |
| Words less than four characters long | 1 | Air, third, this, text |
| Longer or less common words | 1 to 3 | Commotion, airline, understand |
| Punctuation and numbers | 1 | ,1/39 |
| Space | 1 | |
| Emoji | 1 to 3 | |

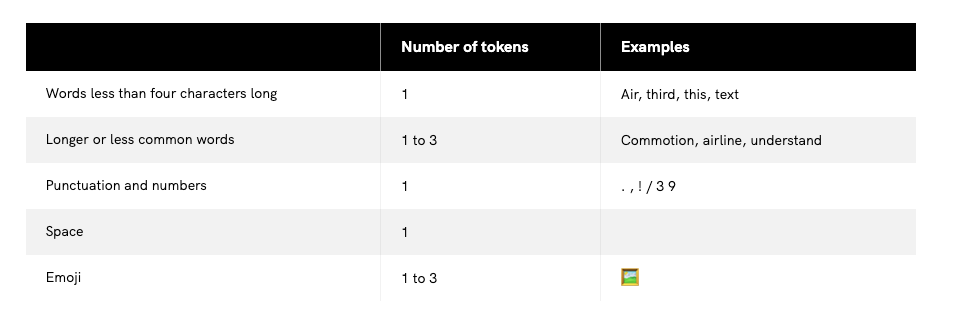

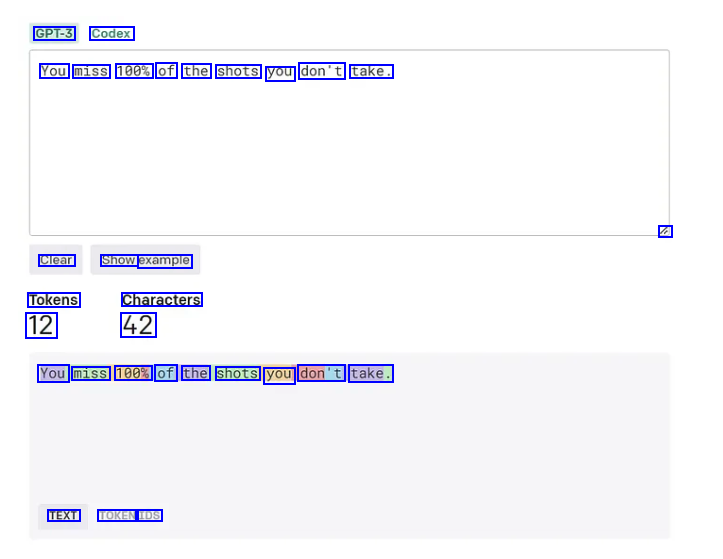

2024-05-16_10-37-01_screenshot.png #

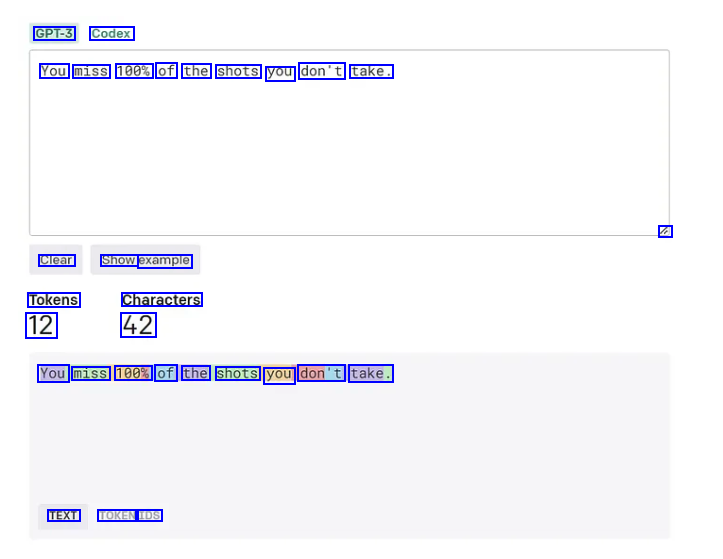

Tokenizer (GPT-3 / Codex) result for the text "You miss 100% of the shots you don't take.": 12 tokens, 42 characters.
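The numbers in this tokenizer screenshot can be checked against the rule of thumb from the summary. The sketch below does not run a tokenizer. It takes the token count of 12 reported in the screenshot as given and derives the ratios from it. The variable names are illustrative.

```python
# Sample sentence from the tokenizer screenshot.
sample = "You miss 100% of the shots you don't take."

# Token count as reported in the screenshot (not computed here).
reported_tokens = 12

chars = len(sample)          # character count, including spaces and punctuation
words = len(sample.split())  # whitespace-separated words

words_per_token = words / reported_tokens
chars_per_token = chars / reported_tokens

print(f"characters: {chars}")                    # characters: 42
print(f"words: {words}")                         # words: 9
print(f"words per token: {words_per_token}")     # words per token: 0.75
print(f"characters per token: {chars_per_token}")  # characters per token: 3.5
```

For this sentence the result is 0.75 words per token, which matches the 750 words per 1,000 tokens figure.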
