TF-IDF

tags :

vectorizer #

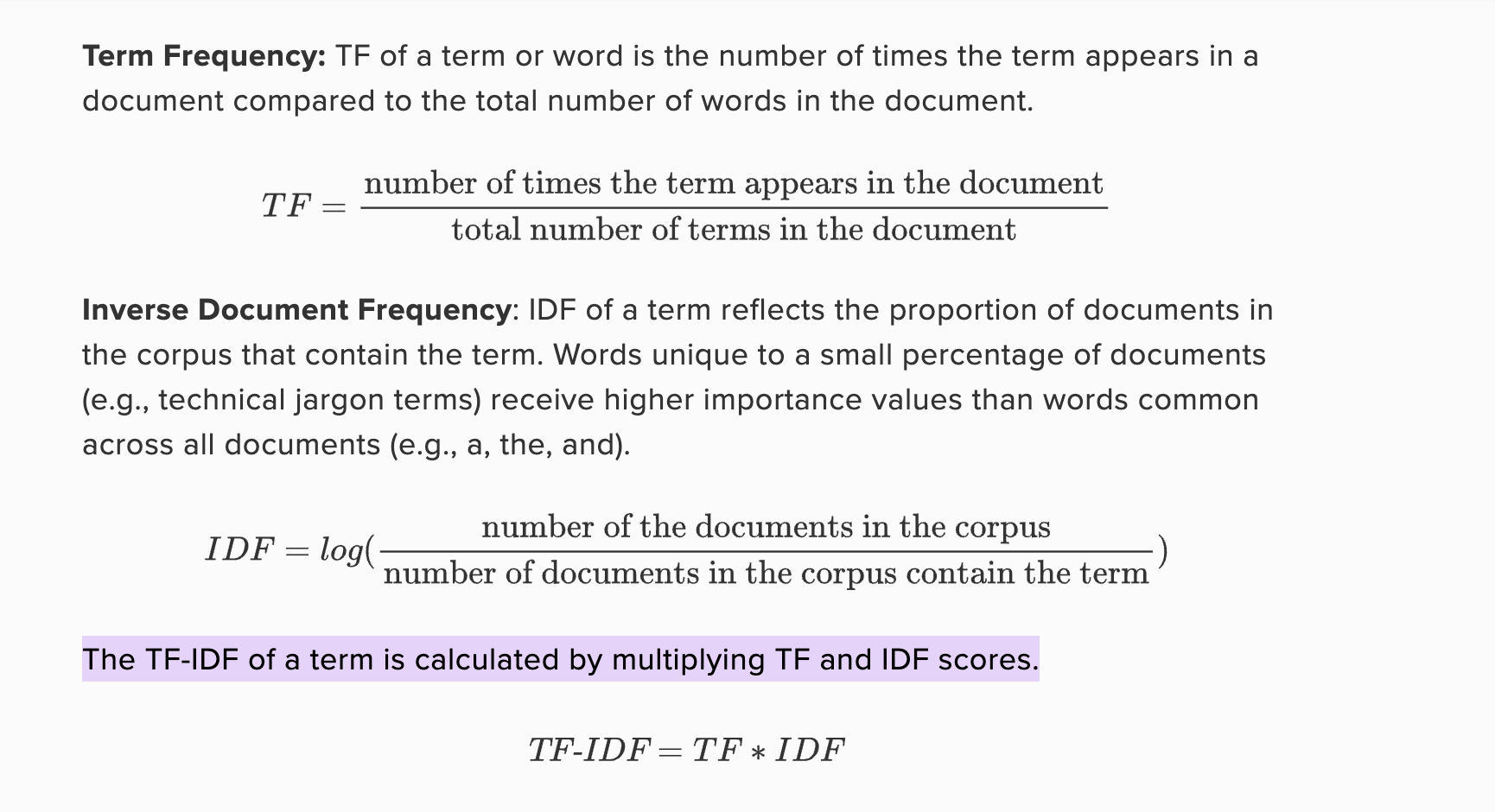

Term Frequency-Inverse Document Frequency (TF-IDF) is a method that takes into account not only the frequency of words in a document but also their importance across the entire corpus. It assigns higher values to words that are important in a specific document but not very common across all documents.

Use Cases #

- 83% of text recommender systems use TF-IDF

- Elasticsearch uses TF-IDF to provide search

- TF local

- IDF global

Vocabulary #

refs: http://blog.christianperone.com/2011/09/machine-learning-text-feature-extraction-tf-idf-part-i/ http://blog.christianperone.com/2011/10/machine-learning-text-feature-extraction-tf-idf-part-ii/ http://blog.christianperone.com/2013/09/machine-learning-cosine-similarity-for-vector-space-models-part-iii/ https://www.youtube.com/watch?v=4vT4fzjkGCQ https://www.youtube.com/watch?v=FrmrHyOSyhE https://github.com/scikit-learn/scikit-learn/blob/0.9.X/sklearn/feature_extraction/text.py

Text corpus is a large and structured set of texts (nowadays usually electronically stored and processed)

TF_IDF: (Term frequency - Inverse document frequency). ref #

In information retrieval, is a numerical statistic that is intended to reflect how important a word is to a document in a collection or corpus. JAK: In other words: how important a word is in a name or a sentence in a collection of names or sentences(corpus).

The tf–idf value increases proportionally to the number of times a word appears in the document(name) and is offset(decrease) by the number of documents(names) in the corpus that contain the word, which helps to adjust for the fact that some words appear more frequently in general. Tf–idf is one of the most popular term-weighting schemes today; 83% of text-based recommender systems in digital libraries use tf–idf.[2]

JAK: Importance(uniqueness) of a word in a in name increases with its frequency in the name and decreases with its occurrences in other names.

Vector Space Model(VSM): #

In sum, VSM is an algebraic model representing textual information as a vector, the components of this vector could represent the importance of a term (tf–idf)

Ref: http://blog.christianperone.com/2011/10/machine-learning-text-feature-extraction-tf-idf-part-ii/

The formula for the tf-idf is then:

and this formula has an important consequence: a high weight of the tf-idf calculation is reached when you have a high term frequency (tf) in the given document (local parameter) and a low document frequency of the term in the whole collection (global parameter).