Ray

Service #

- tags

- Open Source, Python, Python Apps

Effortlessly scale your most complex workloads

Ray is an open-source unified compute framework that makes it easy to scale AI and Python workloads, from reinforcement learning and deep learning to hyperparameter tuning and model serving. Learn more about Ray's rich set of libraries and integrations.

- Ray is a unified framework for scaling AI and Python applications.
- Ray consists of a core distributed runtime and a toolkit of libraries (Ray AIR) for accelerating ML workloads.

Ray supports the MLOps ecosystem.

Ray is a powerful framework for ML orchestration.

Use Cases #

- How Ray solves common production challenges for generative AI infrastructure
- Ray Use Cases

Learning #

Ray: A Framework for Scaling and Distributing Python & ML Applications #

OCR of Images #

2023-08-23_22-40-37_screenshot.png #

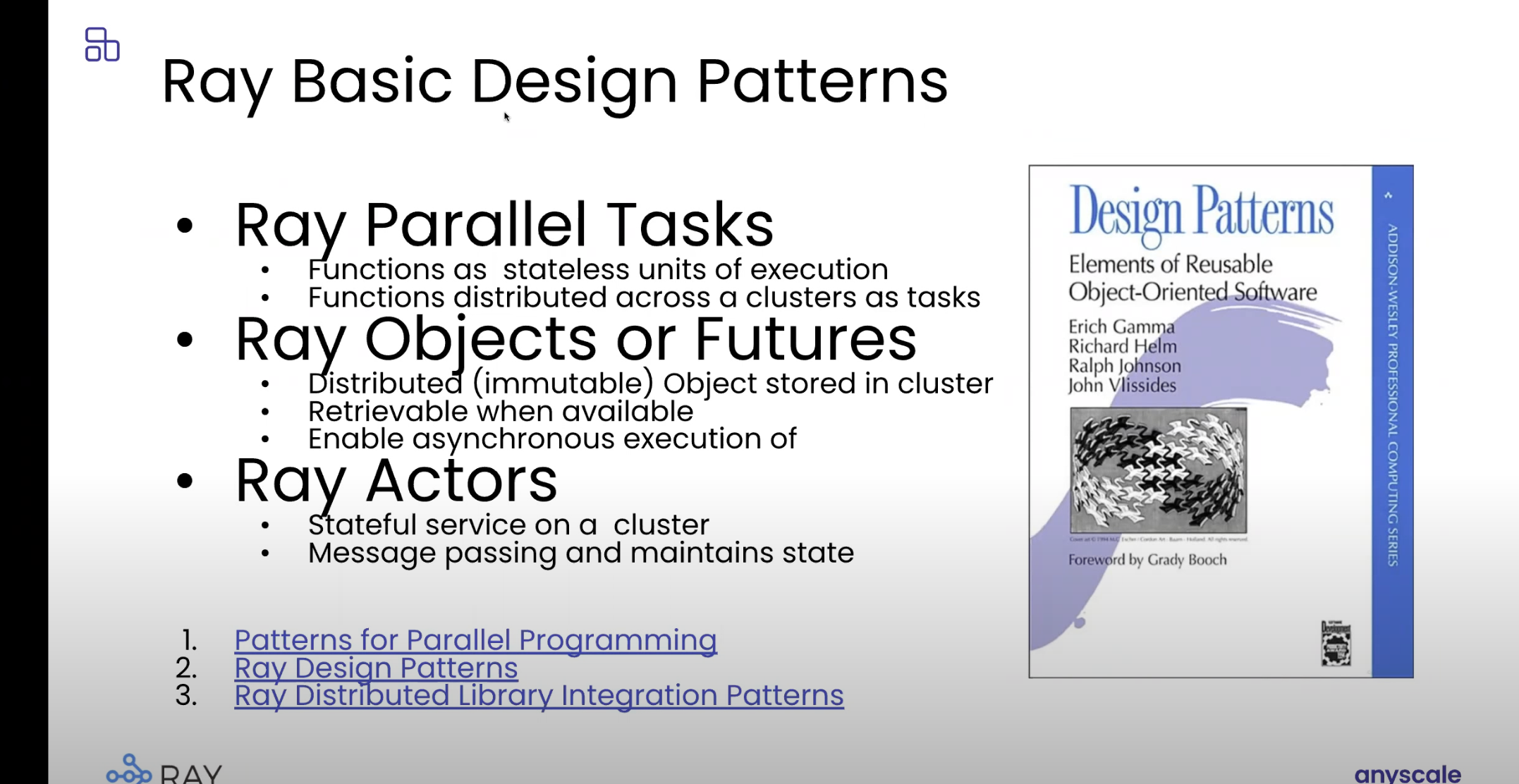

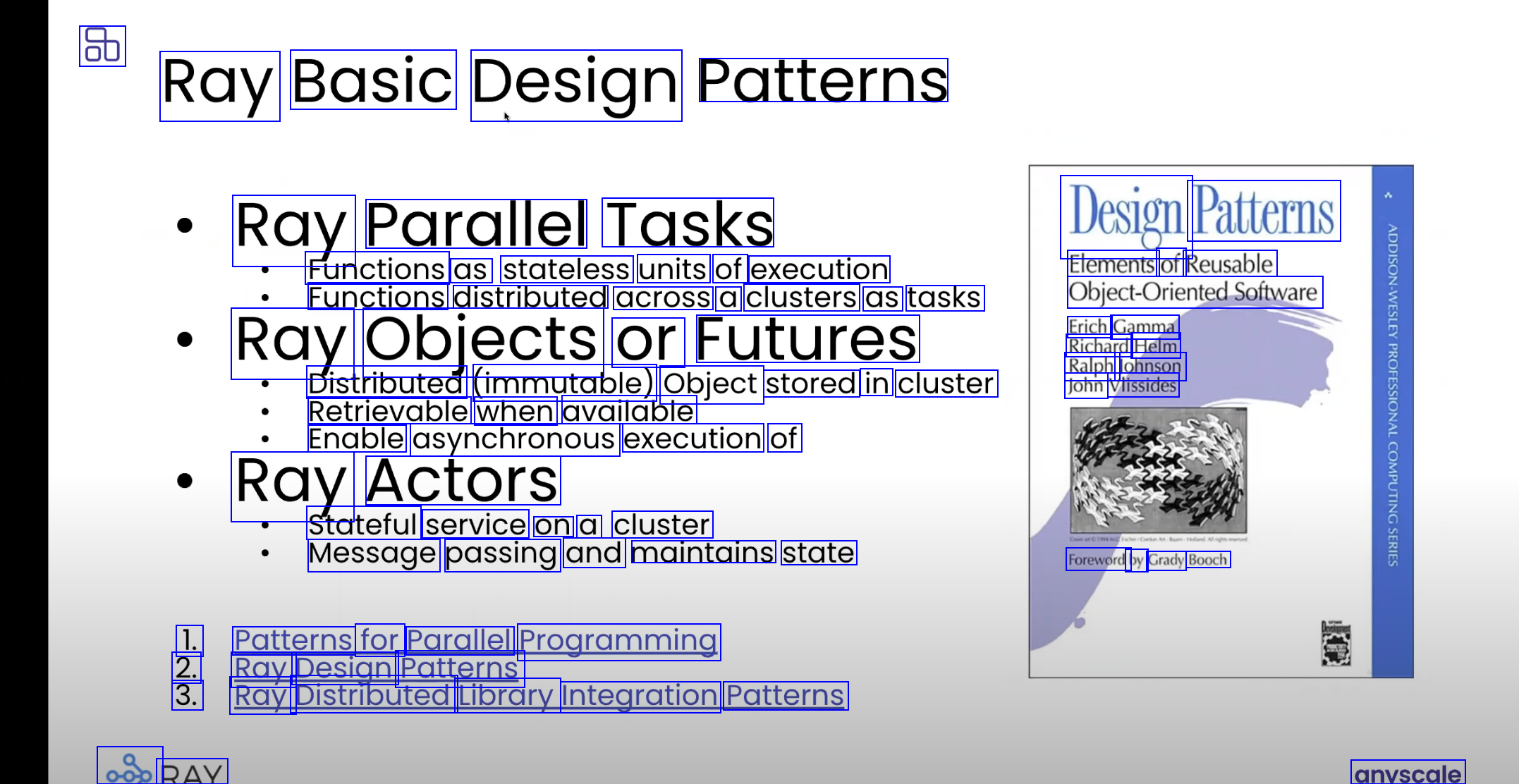

Ray Basic Design Patterns

- Ray Parallel Tasks
  - Functions as stateless units of execution
  - Functions distributed across a cluster as tasks
- Ray Objects or Futures
  - Distributed (immutable) objects stored in the cluster
  - Retrievable when available
  - Enable asynchronous execution
- Ray Actors
  - Stateful service on a cluster
  - Message passing and maintains state

1. Patterns for Parallel Programming
2. Ray Design Patterns
3. Ray Distributed Library Integration Patterns

Book cover shown on the slide: Design Patterns: Elements of Reusable Object-Oriented Software, by Erich Gamma, Richard Helm, Ralph Johnson, and John Vlissides, with a foreword by Grady Booch.

2023-08-23_22-42-15_screenshot.png #

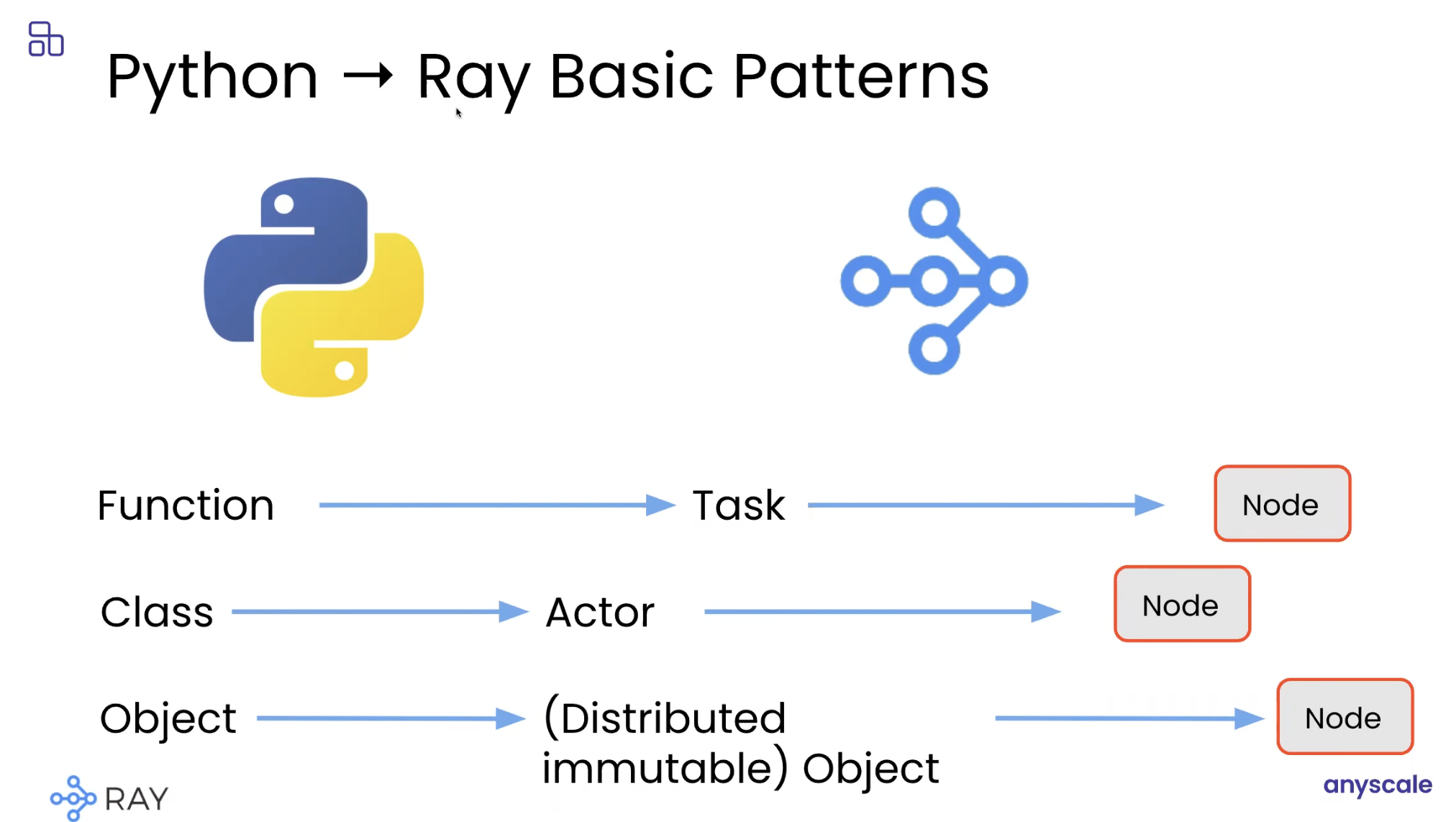

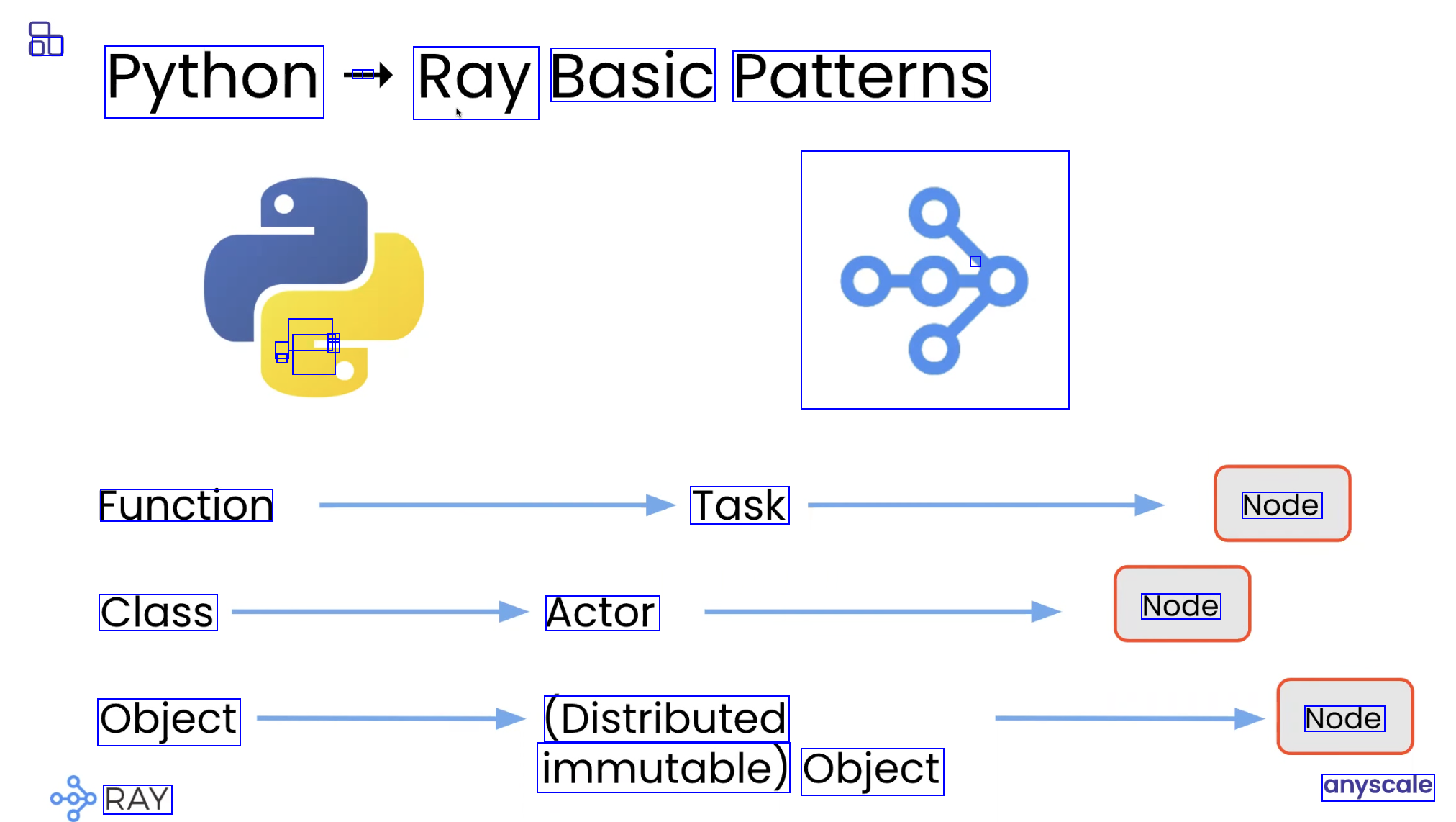

Python to Ray Basic Patterns

- Function → Task (runs on a node)
- Class → Actor (runs on a node)
- Object → Distributed (immutable) object (stored on a node)

2023-08-23_22-42-36_screenshot.png #

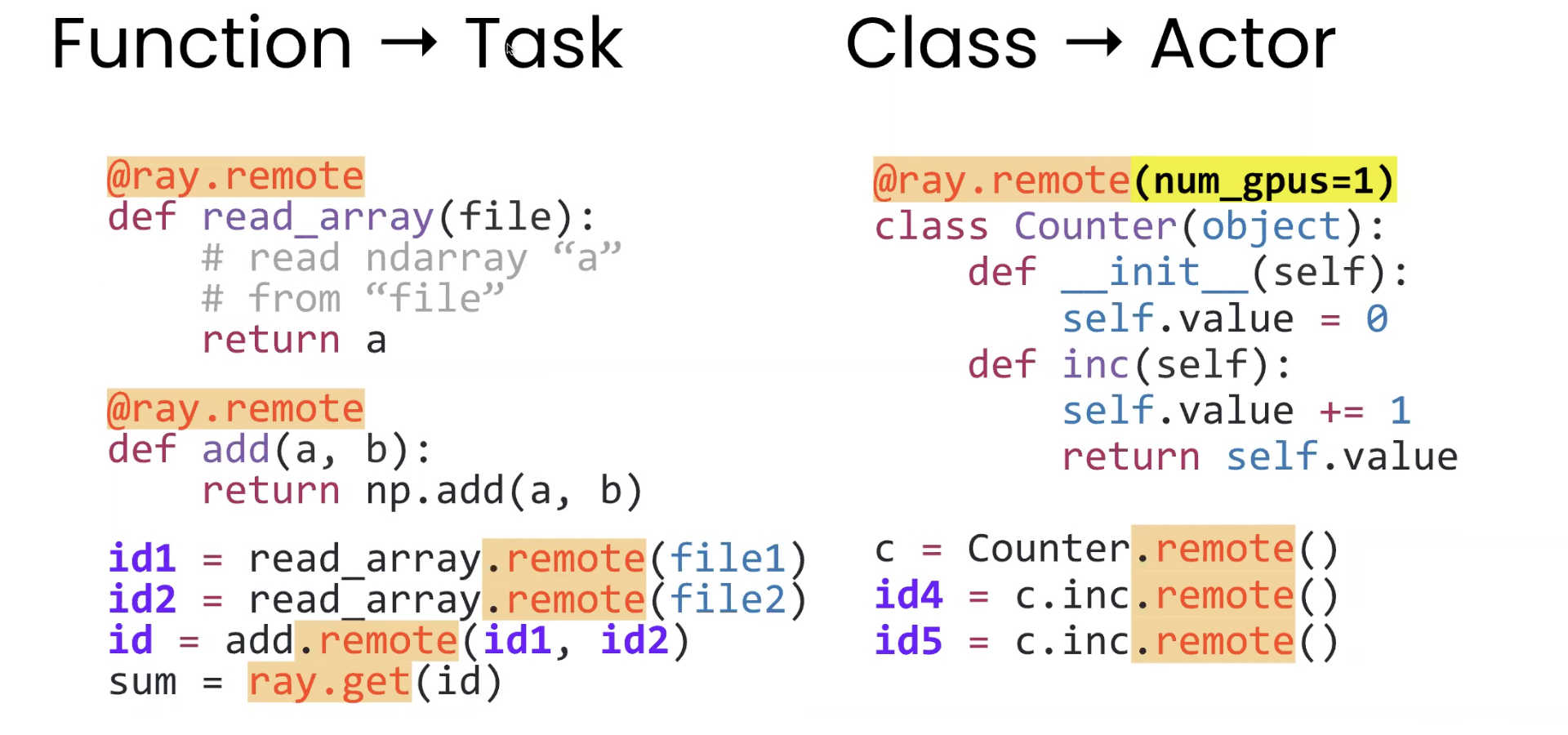

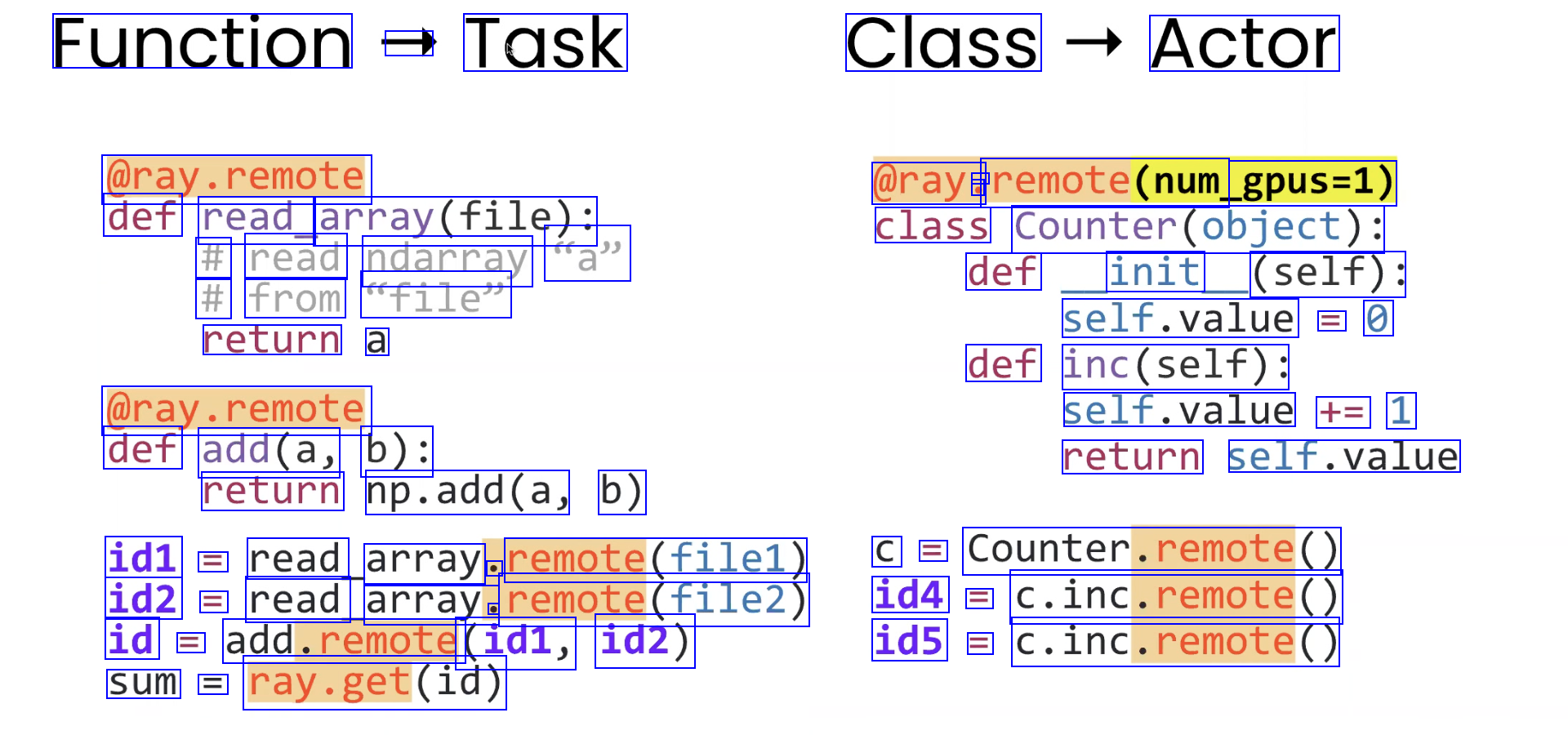

Function → Task

    import numpy as np
    import ray

    @ray.remote
    def read_array(file):
        # Read ndarray "a" from "file". np.load is an assumed loader;
        # the slide leaves this step as a comment.
        a = np.load(file)
        return a

    @ray.remote
    def add(a, b):
        return np.add(a, b)

    # file1 and file2 are paths defined elsewhere (not shown on the slide).
    id1 = read_array.remote(file1)
    id2 = read_array.remote(file2)
    id = add.remote(id1, id2)
    sum = ray.get(id)

Class → Actor

    @ray.remote(num_gpus=1)
    class Counter(object):
        def __init__(self):
            self.value = 0

        def inc(self):
            self.value += 1
            return self.value

    c = Counter.remote()
    id4 = c.inc.remote()
    id5 = c.inc.remote()

2023-08-23_22-44-22_screenshot.png #

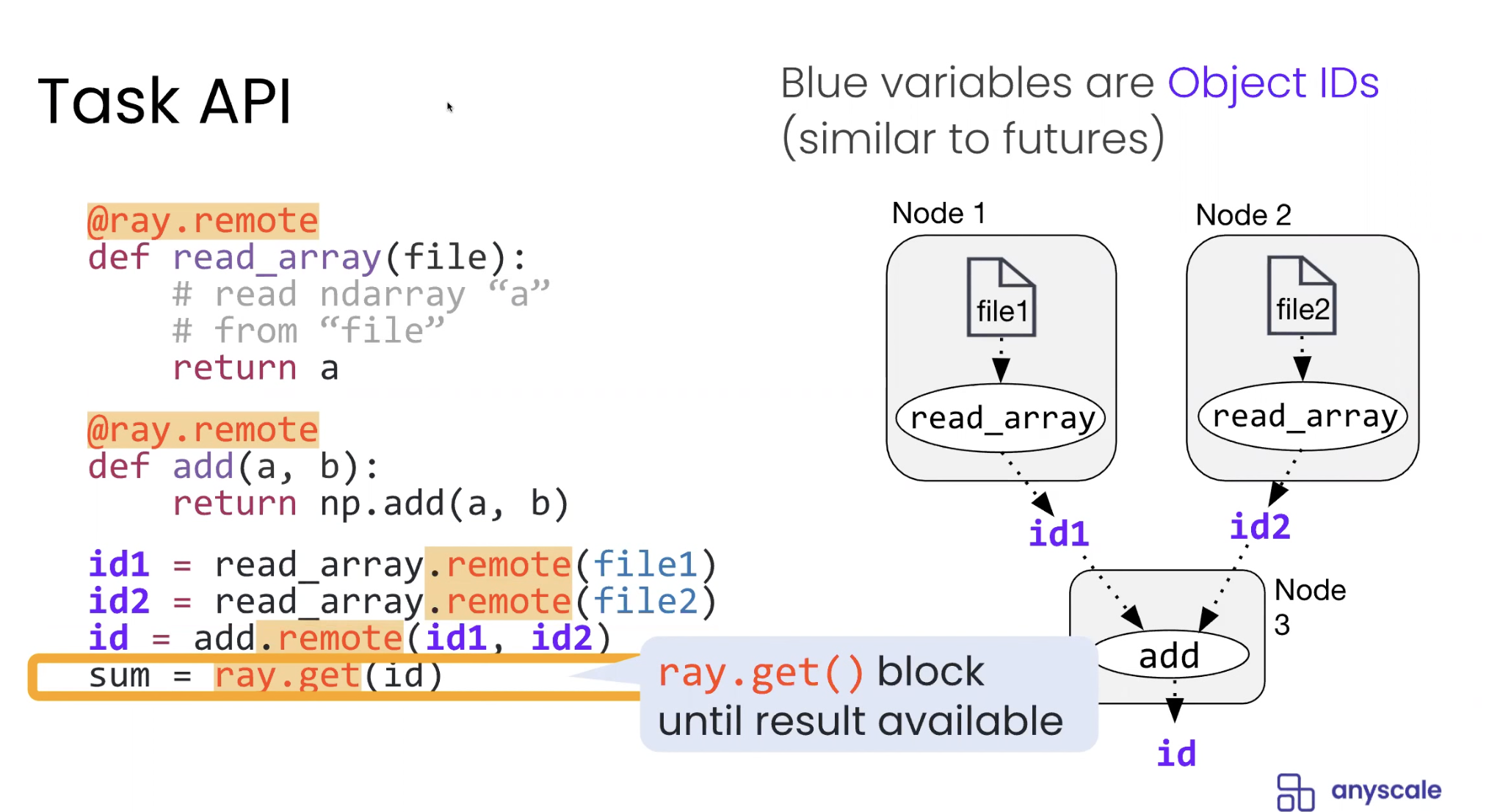

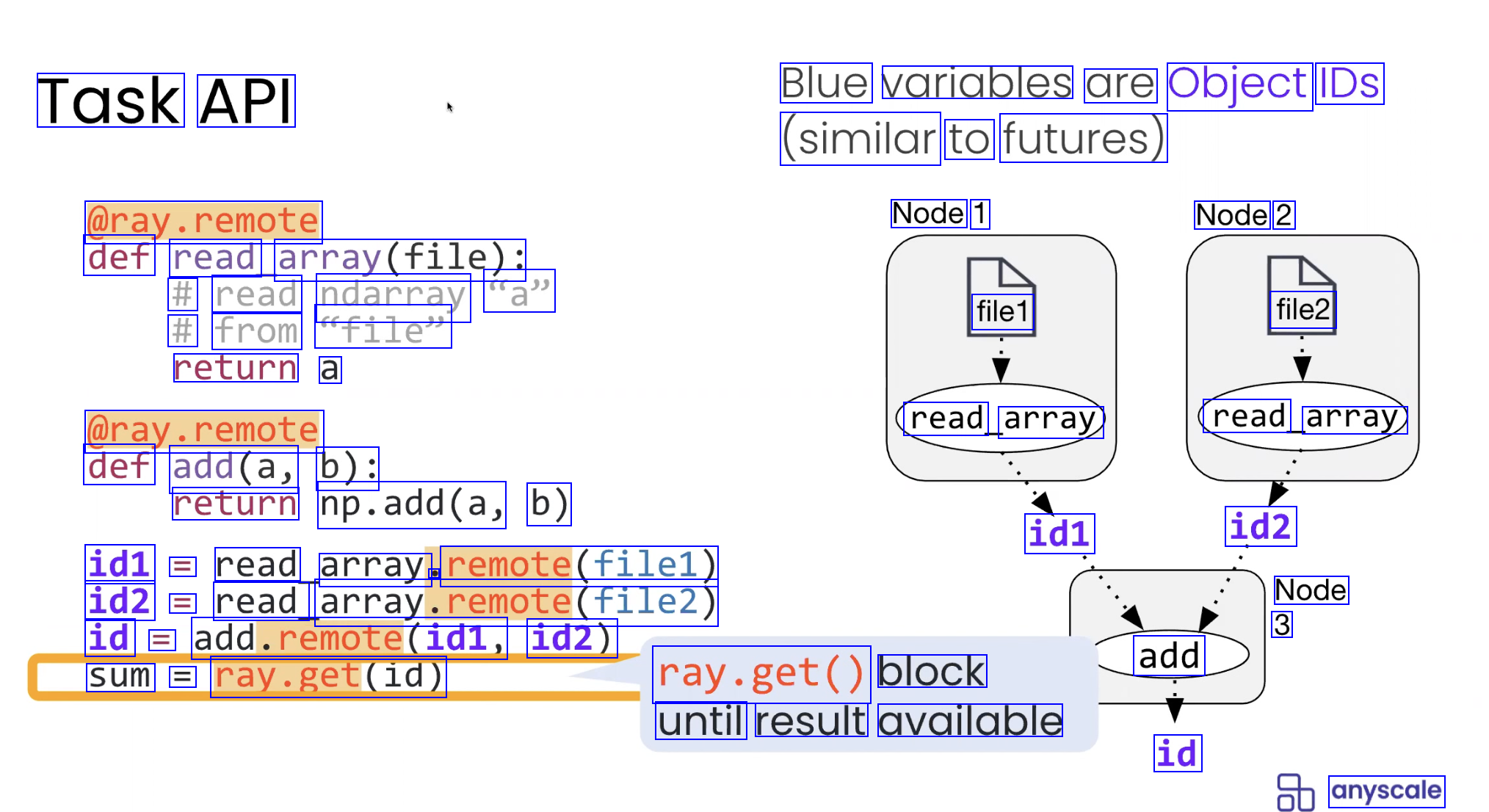

Task API

- Blue variables are Object IDs (similar to futures).
- `ray.get()` blocks until the result is available.

Code on the slide:

    @ray.remote
    def read_array(file):
        # read ndarray "a" from "file"
        return a

    @ray.remote
    def add(a, b):
        return np.add(a, b)

    id1 = read_array.remote(file1)
    id2 = read_array.remote(file2)
    id = add.remote(id1, id2)
    sum = ray.get(id)

Diagram: Node 1 runs `read_array(file1)` and produces `id1`; Node 2 runs `read_array(file2)` and produces `id2`; Node 3 runs `add(id1, id2)` and produces `id`, which `ray.get(id)` resolves to `sum`.

2023-08-23_22-44-40_screenshot.png #

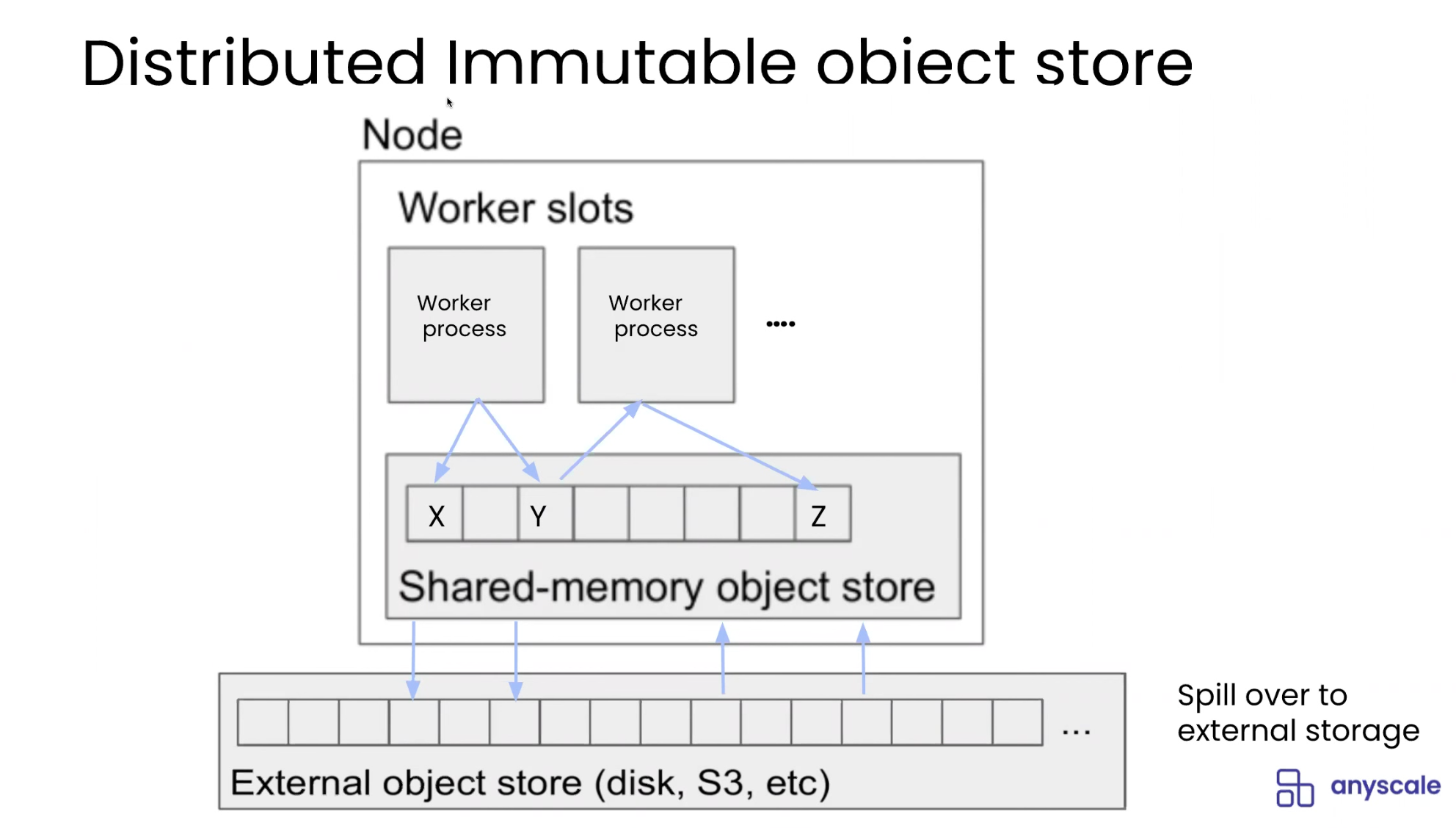

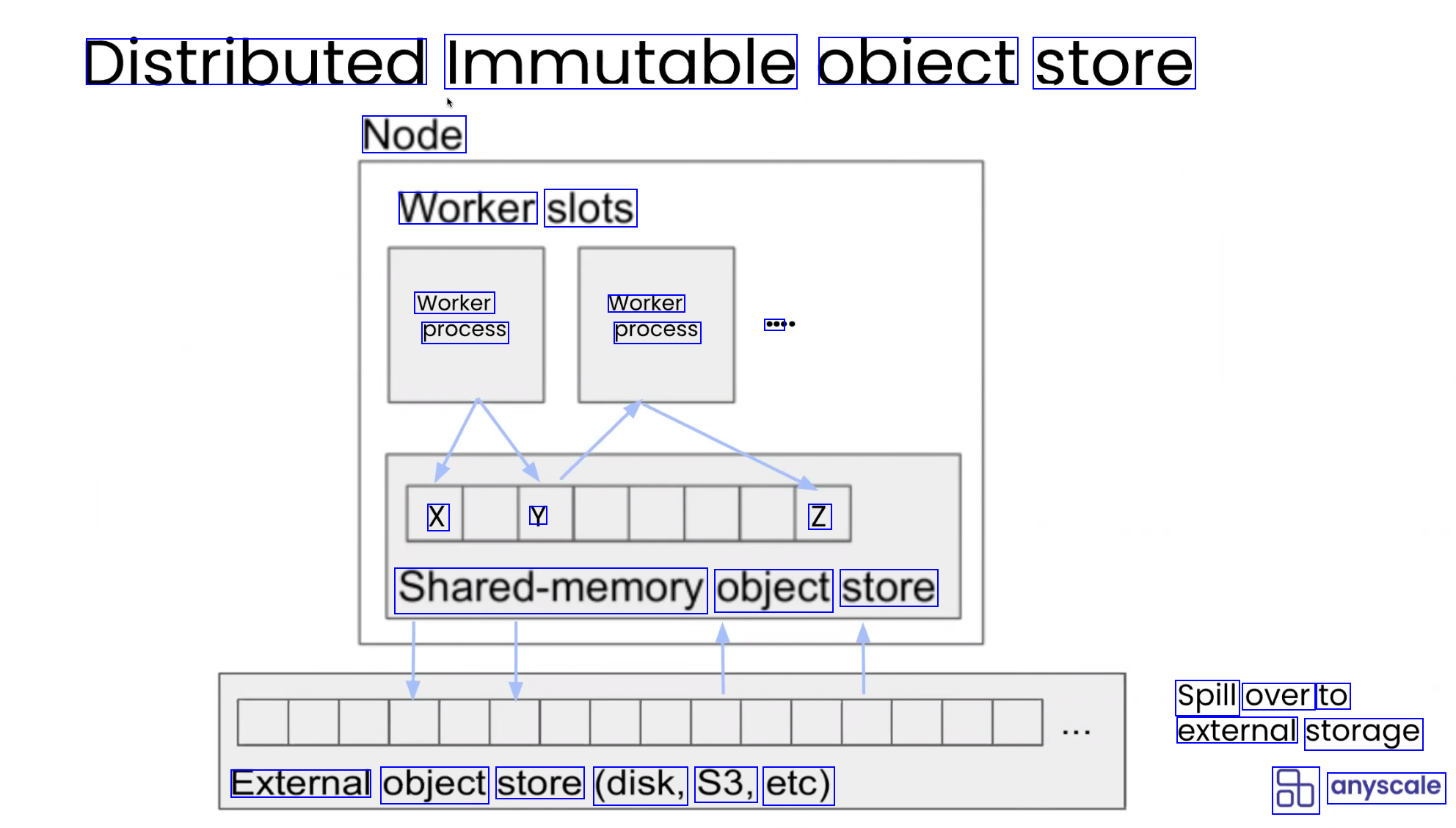

Distributed immutable object store

- Each node has worker slots, each running a worker process.
- Worker processes on a node share objects (X, Y, Z in the diagram) through a shared-memory object store.
- Spill over to external storage: an external object store (disk, S3, etc.).

2023-08-23_22-47-49_screenshot.png #

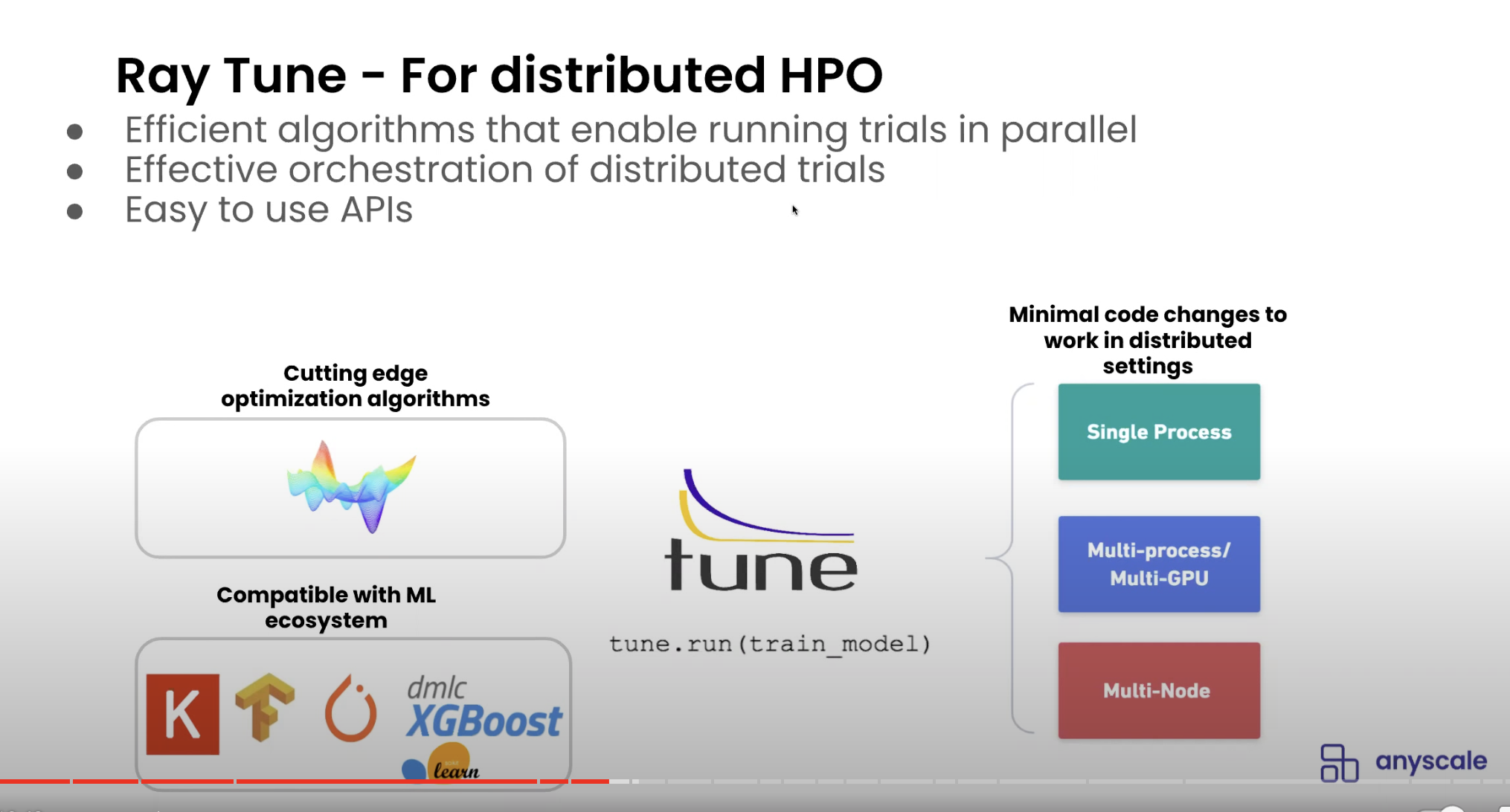

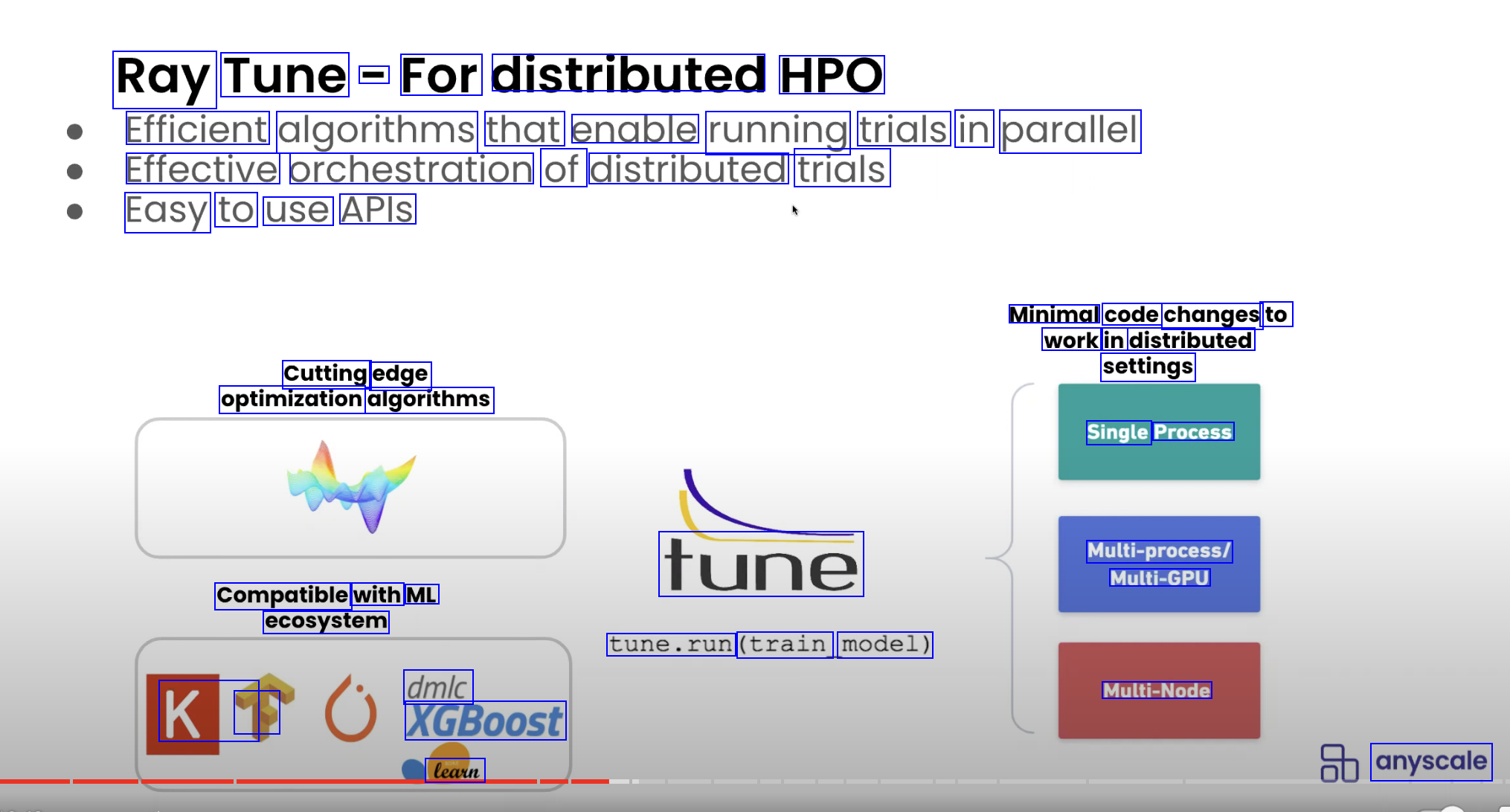

Ray Tune: for distributed HPO (hyperparameter optimization)

- Efficient algorithms that enable running trials in parallel
- Effective orchestration of distributed trials
- Easy-to-use APIs
- Minimal code changes to work in distributed settings
- Cutting-edge optimization algorithms
- Compatible with the ML ecosystem (logos on the slide include dmlc XGBoost)

Diagram: `tune.run(train_model)` scales from a single process to multi-process/multi-GPU to multi-node.
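To make "running trials in parallel" concrete, here is a library-free sketch that uses the standard library's `concurrent.futures` instead of Ray Tune. It is not Ray Tune's API: `train_model`, `run_trials`, the toy loss, and the candidate configurations are all hypothetical stand-ins.

```python
from concurrent.futures import ThreadPoolExecutor


def train_model(config):
    """Hypothetical trial: return a loss for one hyperparameter configuration."""
    lr, depth = config["lr"], config["depth"]
    # Toy objective whose minimum is at lr=0.1, depth=4.
    return (lr - 0.1) ** 2 + (depth - 4) ** 2 * 0.01


def run_trials(configs, max_workers=4):
    """Evaluate every configuration concurrently and return the best one."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as `configs`.
        losses = list(pool.map(train_model, configs))
    best_index = min(range(len(configs)), key=losses.__getitem__)
    return configs[best_index], losses[best_index]


configs = [
    {"lr": lr, "depth": depth}
    for lr in (0.001, 0.01, 0.1)
    for depth in (2, 4, 8)
]
best_config, best_loss = run_trials(configs)
```

Threads are used only to keep the sketch short. Real training trials are CPU- or GPU-bound, which is why a tool like Ray Tune distributes them across processes and nodes.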

2023-08-23_22-48-38_screenshot.png #

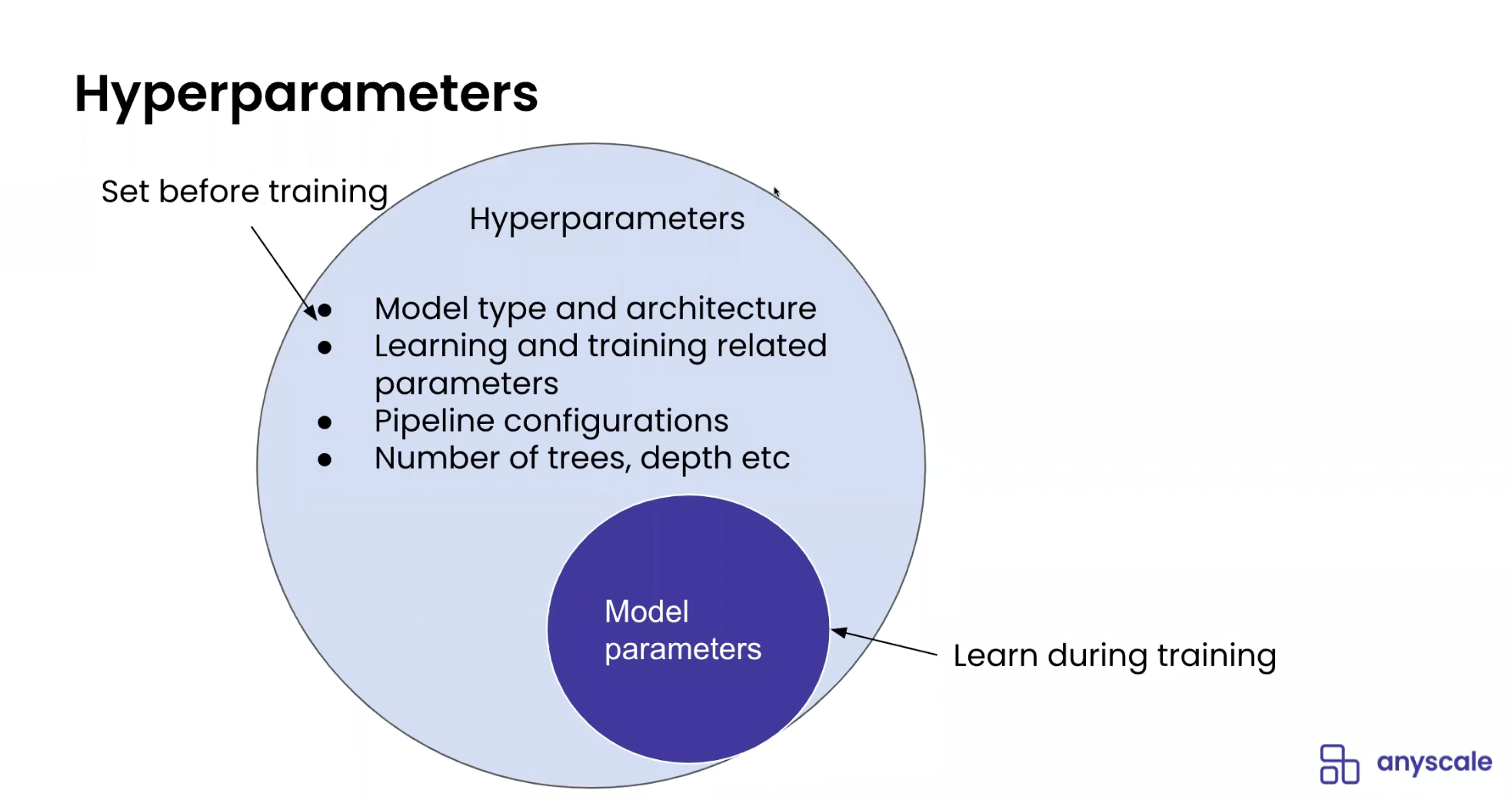

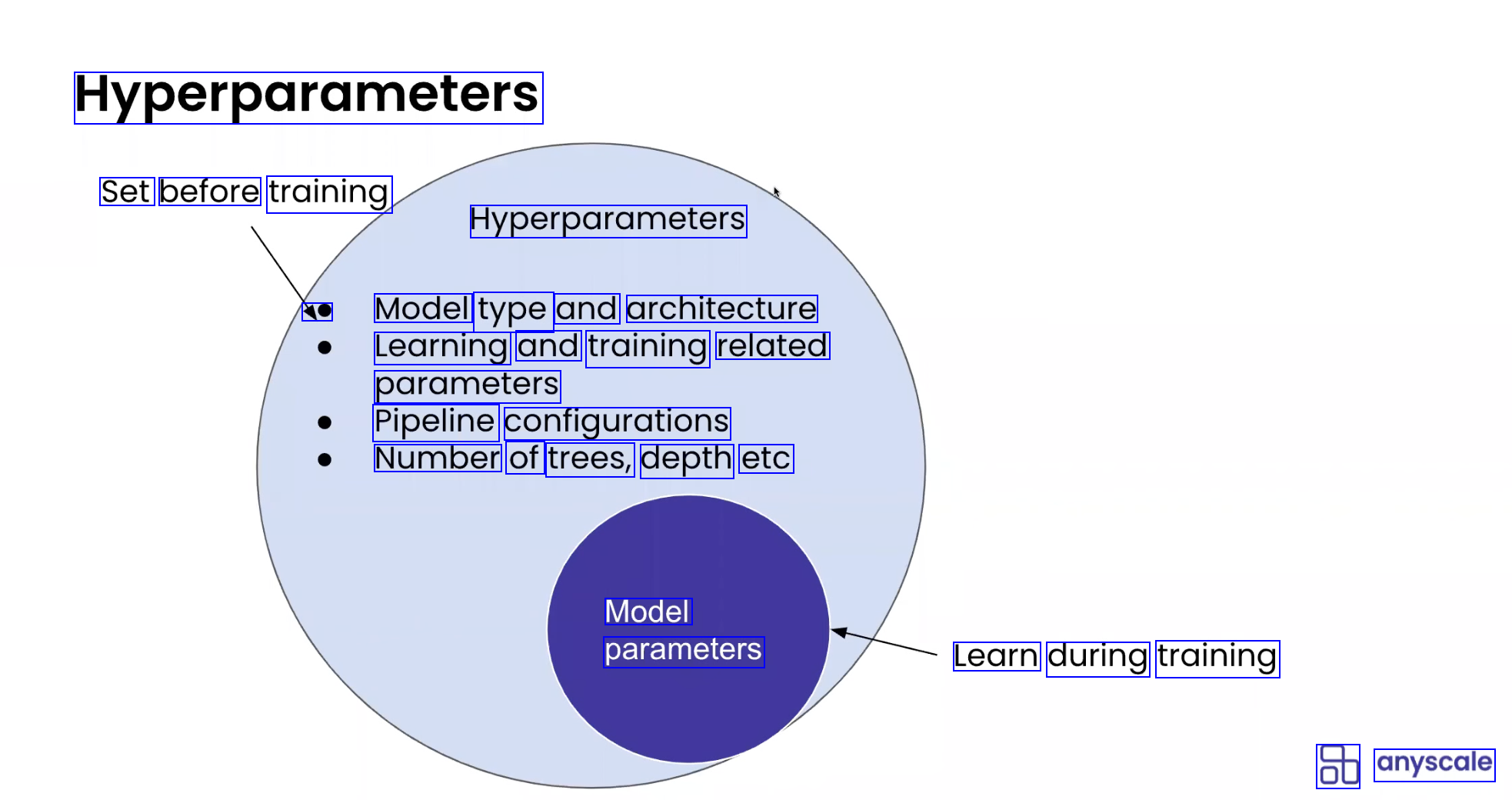

Hyperparameters: set before training

- Model type and architecture
- Learning and training related parameters
- Pipeline configurations
- Number of trees, depth, etc.

Model parameters: learned during training
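The distinction can be sketched with a tiny gradient-descent fit in plain Python. Here `learning_rate` and `epochs` are hyperparameters chosen before training, while the slope `w` is the model parameter learned during training. The function name `fit_slope` and the data are made up for illustration.

```python
def fit_slope(xs, ys, learning_rate, epochs):
    """Fit y ~ w * x by gradient descent on mean squared error."""
    w = 0.0  # model parameter: learned during training
    n = len(xs)
    for _ in range(epochs):
        # Gradient of mean((w*x - y)^2) with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad
    return w


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # generated by y = 2x

# Hyperparameters are fixed before training starts.
w_good = fit_slope(xs, ys, learning_rate=0.05, epochs=200)  # converges near 2
w_bad = fit_slope(xs, ys, learning_rate=0.2, epochs=20)     # step too large, diverges
```

The same data and model give a usable slope with one learning rate and a diverging one with another, which is the reason hyperparameters need tuning at all.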

2023-08-23_22-49-10_screenshot.png #

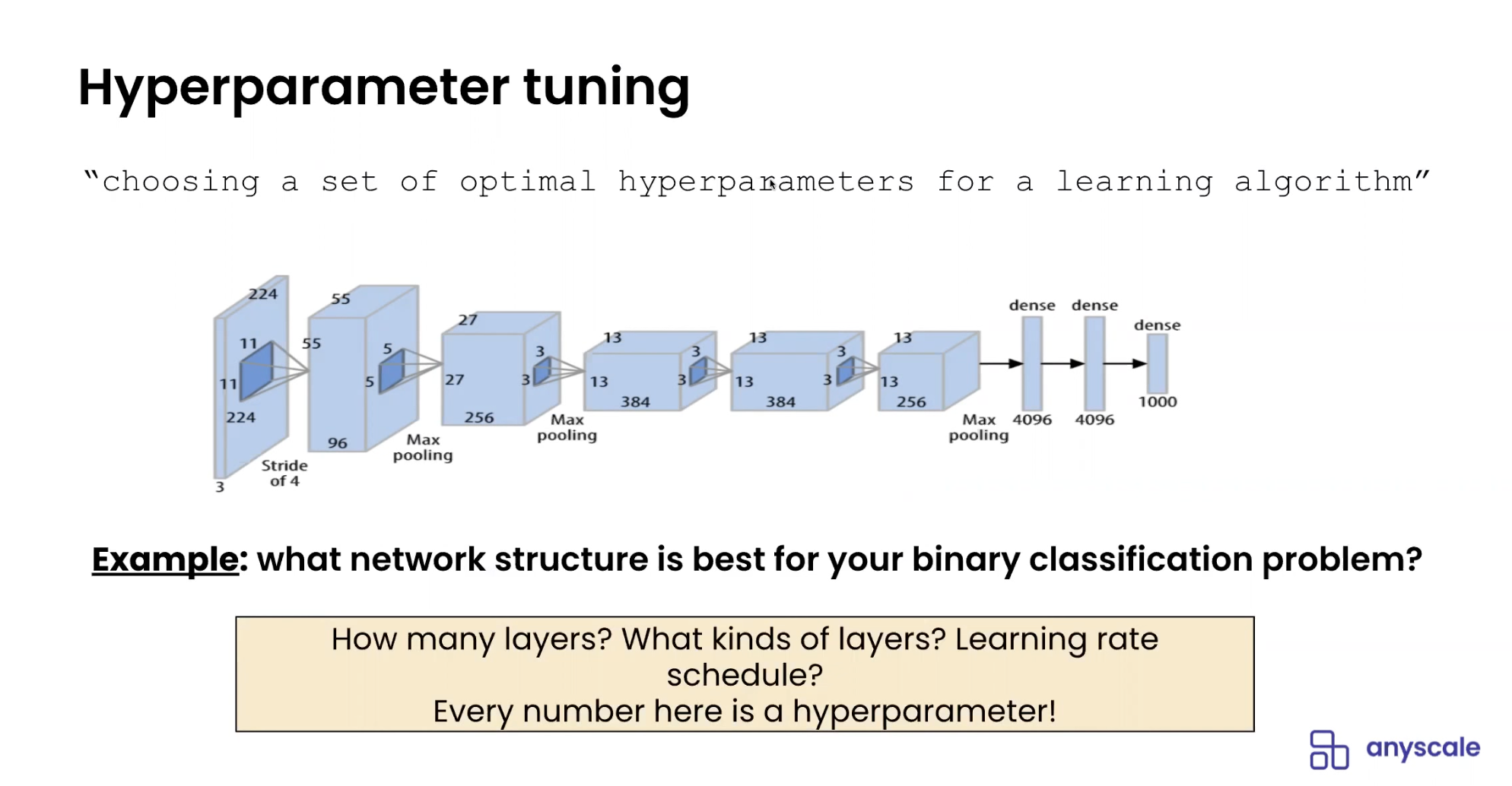

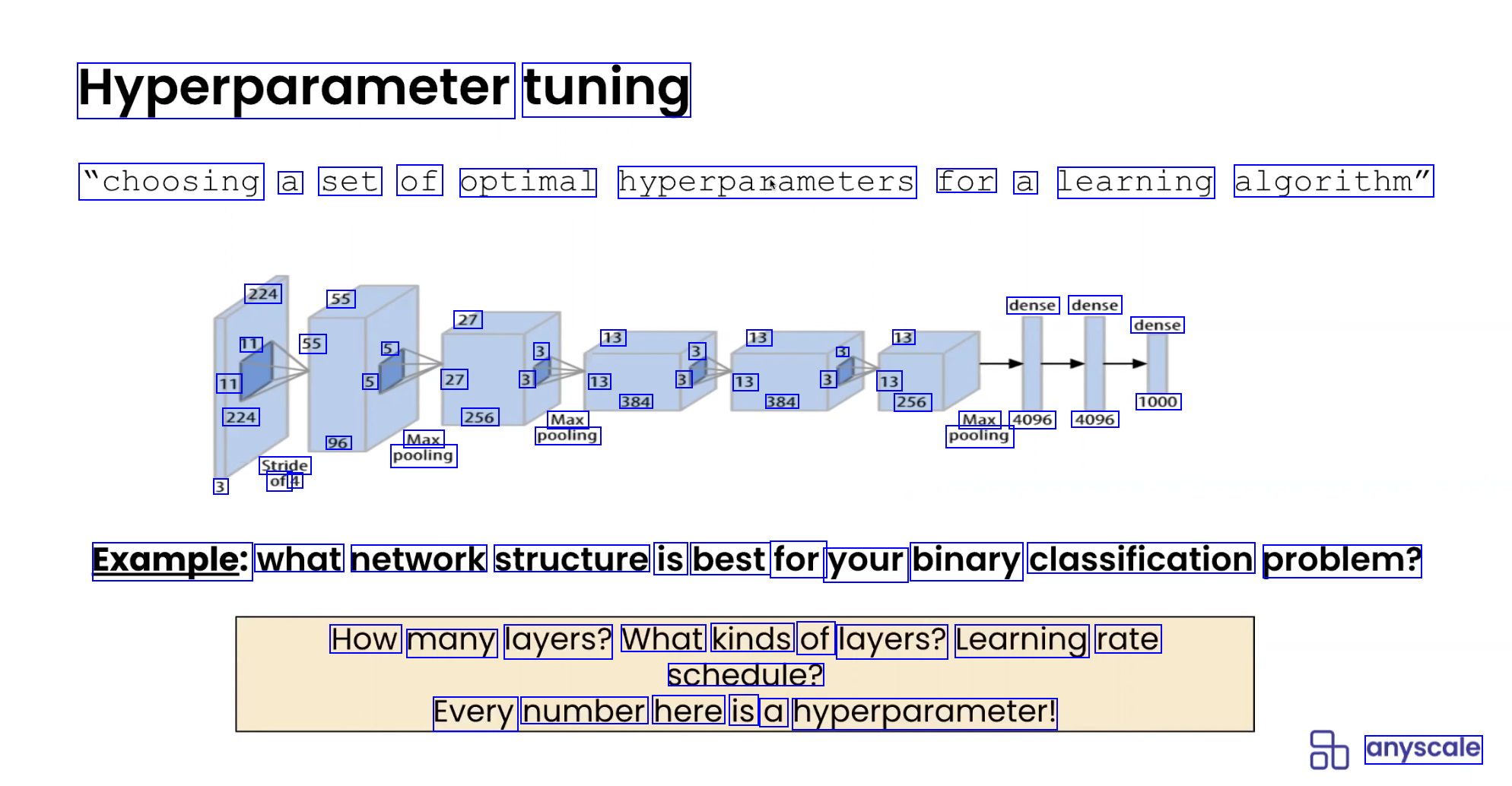

Hyperparameter tuning: "choosing a set of optimal hyperparameters for a learning algorithm"

[Figure: convolutional network diagram annotated with layer dimensions, max-pooling stages, a stride of 4, and dense layers of 4096, 4096, and 1000 units.]

Example: What network structure is best for your binary classification problem? How many layers? What kinds of layers? Learning rate schedule? Every number here is a hyperparameter.
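Because every such choice multiplies the number of possible configurations, the search space grows quickly. The sketch below counts the size of a small grid and draws a few random configurations from it. The hyperparameter names and candidate values in `search_space` are invented for illustration and are not taken from the slide.

```python
import random
from math import prod

# Hypothetical search space: each key is a hyperparameter, each list its candidates.
search_space = {
    "num_layers": [2, 4, 8, 16],
    "layer_kind": ["dense", "conv"],
    "kernel_size": [3, 5, 11],
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "lr_schedule": ["constant", "step", "cosine"],
}


def grid_size(space):
    """Number of configurations in the full grid (product of list lengths)."""
    return prod(len(choices) for choices in space.values())


def sample_configs(space, num_samples, seed=0):
    """Draw random configurations instead of enumerating the whole grid."""
    rng = random.Random(seed)
    return [
        {name: rng.choice(choices) for name, choices in space.items()}
        for _ in range(num_samples)
    ]


total = grid_size(search_space)  # 4 * 2 * 3 * 3 * 3 = 216
trials = sample_configs(search_space, num_samples=5)
```

Even this small space has 216 grid points, so sampling a handful of configurations is a common first step before applying smarter search algorithms.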