opensearch-neural-search


OpenSearch Neural Search Plugin #

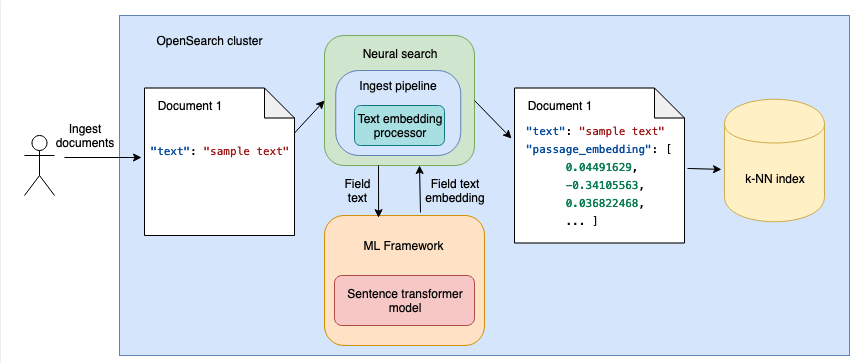

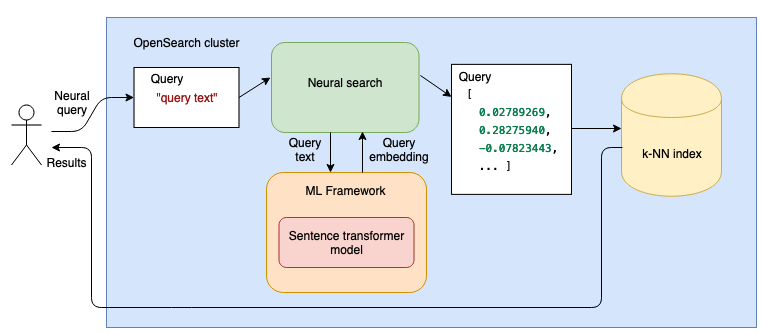

The OpenSearch Neural Search plugin integrates machine learning (ML) language models into your search workloads. During ingestion and search, the plugin transforms text into vectors, and then uses those vectors in vector-based search.

- Neural search transforms text into vectors and facilitates vector search both at ingestion time and at search time.

- Introduced in OpenSearch 2.9 (ref).
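To make the ingestion-time and search-time roles concrete, here is a minimal sketch of the idea in plain Python. It is not the plugin's implementation: `toy_embed` is a hypothetical stand-in for a real embedding model (it only hashes words into buckets), and the in-memory `index` list stands in for a k-NN index.

```python
import math
from collections import Counter


def toy_embed(text: str, dim: int = 16) -> list[float]:
    """Hypothetical stand-in for an embedding model: hash each word into a bucket."""
    vec = [0.0] * dim
    for word, count in Counter(text.lower().split()).items():
        bucket = sum(ord(ch) for ch in word) % dim  # deterministic toy hash
        vec[bucket] += count
    return vec


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 for a zero vector)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Ingestion time: store each document together with its vector.
docs = ["how are you", "today is sunny", "today is july fifth", "it is winter"]
index = [(doc, toy_embed(doc)) for doc in docs]


def search(query: str, k: int = 2) -> list[tuple[str, float]]:
    """Search time: embed the query, then return the k most similar documents."""
    q = toy_embed(query)
    scored = [(doc, cosine(q, vec)) for doc, vec in index]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


top = search("today is sunny")
```

A real model places semantically related text close together even when no words are shared, which this word-hashing toy cannot do; the sketch only shows where the transformation happens in each phase.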

Using Embedding models #

Pretrained by OpenSearch #

OpenSearch provides a variety of open-source pretrained models that can assist with a range of machine learning (ML) search and analytics use cases. You can upload any supported model to the OpenSearch cluster and use it locally.

OpenSearch supports the following models, categorized by type. Text embedding models are sourced from Hugging Face.

Sparse Encoding #

OpenSearch recommends the following combinations for optimal performance (ref):

- Use the amazon/neural-sparse/opensearch-neural-sparse-encoding-v2-distill model during both ingestion and search.

- Use the amazon/neural-sparse/opensearch-neural-sparse-encoding-doc-v2-distill model during ingestion and the amazon/neural-sparse/opensearch-neural-sparse-tokenizer-v1 tokenizer during search.
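The difference between the two combinations is easier to see with a small sketch. It assumes that a sparse encoding is a map from tokens to weights and that relevance is the dot product over tokens shared by the query and the document; the token weights below are made up for illustration and do not come from any of the models named above.

```python
def sparse_score(query: dict[str, float], doc: dict[str, float]) -> float:
    """Dot product over the tokens that appear in both sparse vectors."""
    return sum(weight * doc[token] for token, weight in query.items() if token in doc)


# Ingestion: the document is expanded into weighted tokens (hypothetical values).
doc_vector = {"west": 0.5, "cowboy": 1.5, "rodeo": 1.0}

# Combination 1 (assumed): the query is also encoded by a model, so it can
# gain expansion tokens such as "cowboy" that the user never typed.
query_encoded = {"wild": 1.0, "west": 2.0, "cowboy": 0.5}

# Combination 2 (assumed): the query is only tokenized, so it contains just
# the typed tokens, each with a looked-up weight.
query_tokenized = {"wild": 1.0, "west": 2.0}

score_encoded = sparse_score(query_encoded, doc_vector)      # 2.0*0.5 + 0.5*1.5
score_tokenized = sparse_score(query_tokenized, doc_vector)  # 2.0*0.5
```

Under these assumptions the tokenizer-only path trades some recall at query time for a much cheaper search step, because no model inference is needed for the query.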

Cross Encoders #

Cross-encoder models support query reranking.

The following table provides a list of cross-encoder models and artifact links you can use to download them. Note that you must prefix the model name with huggingface/cross-encoders, as shown in the Model name column.

| Model name | Version | TorchScript artifact | ONNX artifact |
|---|---|---|---|
| huggingface/cross-encoders/ms-marco-MiniLM-L-6-v2 | 1.0.2 | model_url, config_url | model_url, config_url |
| huggingface/cross-encoders/ms-marco-MiniLM-L-12-v2 | 1.0.2 | model_url, config_url | model_url, config_url |

    POST _plugins/_ml/models/<model_id>/_predict
    {
      "query_text": "today is sunny",
      "text_docs": [
        "how are you",
        "today is sunny",
        "today is july fifth",
        "it is winter"
      ]
    }

The model calculates the similarity score of query_text and each document in text_docs and returns a list of scores for each document in the order they were provided in text_docs:

Result

    {
      "inference_results": [
        {
          "output": [
            {
              "name": "similarity",
              "data_type": "FLOAT32",
              "shape": [1],
              "data": [-6.077798],
              "byte_buffer": {
                "array": "Un3CwA==",
                "order": "LITTLE_ENDIAN"
              }
            }
          ]
        },
        {
          "output": [
            {
              "name": "similarity",
              "data_type": "FLOAT32",
              "shape": [1],
              "data": [10.223609],
              "byte_buffer": {
                "array": "55MjQQ==",
                "order": "LITTLE_ENDIAN"
              }
            }
          ]
        },
        {
          "output": [
            {
              "name": "similarity",
              "data_type": "FLOAT32",
              "shape": [1],
              "data": [-1.3987057],
              "byte_buffer": {
                "array": "ygizvw==",
                "order": "LITTLE_ENDIAN"
              }
            }
          ]
        },
        {
          "output": [
            {
              "name": "similarity",
              "data_type": "FLOAT32",
              "shape": [1],
              "data": [-4.5923924],
              "byte_buffer": {
                "array": "4fSSwA==",
                "order": "LITTLE_ENDIAN"
              }
            }
          ]
        }
      ]
    }
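As an illustration of how a client might consume this response, the following sketch decodes each byte_buffer value and ranks the documents by score. The helper names `decode_score` and `rank_documents` are hypothetical, and the response dictionary is trimmed to the fields the sketch reads, assuming the nesting shown above (one object per document, each with a single-element output list).

```python
import base64
import struct


def decode_score(byte_buffer: dict) -> float:
    """Decode a base64-encoded 32-bit float using the byte order the response reports."""
    raw = base64.b64decode(byte_buffer["array"])
    fmt = "<f" if byte_buffer["order"] == "LITTLE_ENDIAN" else ">f"
    return struct.unpack(fmt, raw)[0]


def rank_documents(text_docs: list[str], response: dict) -> list[tuple[str, float]]:
    """Pair each document with its score (same order as text_docs), best first."""
    scores = [
        decode_score(result["output"][0]["byte_buffer"])
        for result in response["inference_results"]
    ]
    return sorted(zip(text_docs, scores), key=lambda pair: pair[1], reverse=True)


text_docs = ["how are you", "today is sunny", "today is july fifth", "it is winter"]

# Trimmed copy of the response above: only the byte_buffer fields are kept.
response = {
    "inference_results": [
        {"output": [{"byte_buffer": {"array": array, "order": "LITTLE_ENDIAN"}}]}
        for array in ("Un3CwA==", "55MjQQ==", "ygizvw==", "4fSSwA==")
    ]
}

ranked = rank_documents(text_docs, response)
```

The decoded values match the data arrays in the response, so reading `data[0]` directly is an equally valid shortcut; the sketch decodes byte_buffer only to show what that field contains.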

Custom local models #

To use a custom model locally, you can upload it to the OpenSearch cluster (ref).

To upload a custom model to OpenSearch, you need to prepare it outside of your OpenSearch cluster. You can use a pretrained model, like one from Hugging Face, or train a new model in accordance with your needs.

Register the model by providing a URL from which OpenSearch downloads it:

    POST /_plugins/_ml/models/_register
    {
      "name": "huggingface/sentence-transformers/msmarco-distilbert-base-tas-b",
      "version": "1.0.1",
      "model_group_id": "wlcnb4kBJ1eYAeTMHlV6",
      "description": "This is a port of the DistilBert TAS-B Model to sentence-transformers model: It maps sentences & paragraphs to a 768 dimensional dense vector space and is optimized for the task of semantic search.",
      "function_name": "TEXT_EMBEDDING",
      "model_format": "TORCH_SCRIPT",
      "model_content_size_in_bytes": 266352827,
      "model_content_hash_value": "acdc81b652b83121f914c5912ae27c0fca8fabf270e6f191ace6979a19830413",
      "model_config": {
        "model_type": "distilbert",
        "embedding_dimension": 768,
        "framework_type": "sentence_transformers",
        "all_config": """{"_name_or_path":"old_models/msmarco-distilbert-base-tas-b/0_Transformer","activation":"gelu","architectures":["DistilBertModel"],"attention_dropout":0.1,"dim":768,"dropout":0.1,"hidden_dim":3072,"initializer_range":0.02,"max_position_embeddings":512,"model_type":"distilbert","n_heads":12,"n_layers":6,"pad_token_id":0,"qa_dropout":0.1,"seq_classif_dropout":0.2,"sinusoidal_pos_embds":false,"tie_weights_":true,"transformers_version":"4.7.0","vocab_size":30522}"""
      },
      "created_time": 1676073973126,
      "url": "https://artifacts.opensearch.org/models/ml-models/huggingface/sentence-transformers/msmarco-distilbert-base-tas-b/1.0.1/torch_script/sentence-transformers_msmarco-distilbert-base-tas-b-1.0.1-torch_script.zip"
    }

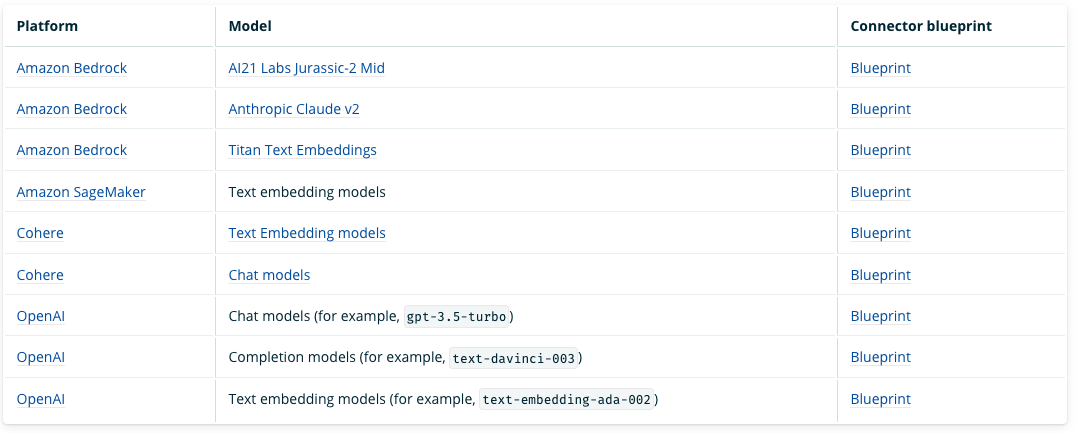
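When preparing a custom model archive, the model_content_size_in_bytes and model_content_hash_value fields of the register request have to describe the file at the given URL. The sketch below computes both for a local file, assuming the hash is the SHA-256 hex digest of the archive (the 64-character value in the request above is consistent with that). The function name `model_file_metadata` is hypothetical.

```python
import hashlib


def model_file_metadata(path: str, chunk_size: int = 1 << 20) -> dict:
    """Return the size and SHA-256 hex digest of a model archive, read in chunks."""
    digest = hashlib.sha256()
    size = 0
    with open(path, "rb") as handle:
        # Read fixed-size chunks so large archives are never loaded fully into memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
            size += len(chunk)
    return {
        "model_content_size_in_bytes": size,
        "model_content_hash_value": digest.hexdigest(),
    }
```

The returned dictionary can be merged into the register request body before sending it, for example `body.update(model_file_metadata("my-model.zip"))`, where `my-model.zip` is a placeholder file name.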

Connecting to externally hosted models #

Integrations with machine learning (ML) models hosted on third-party platforms allow system administrators and data scientists to run ML workloads outside of their OpenSearch cluster. Connecting to externally hosted models enables ML developers to create integrations with other ML services, such as Amazon SageMaker or OpenAI.

Ingestion and Query #

Ingestion #

Query #

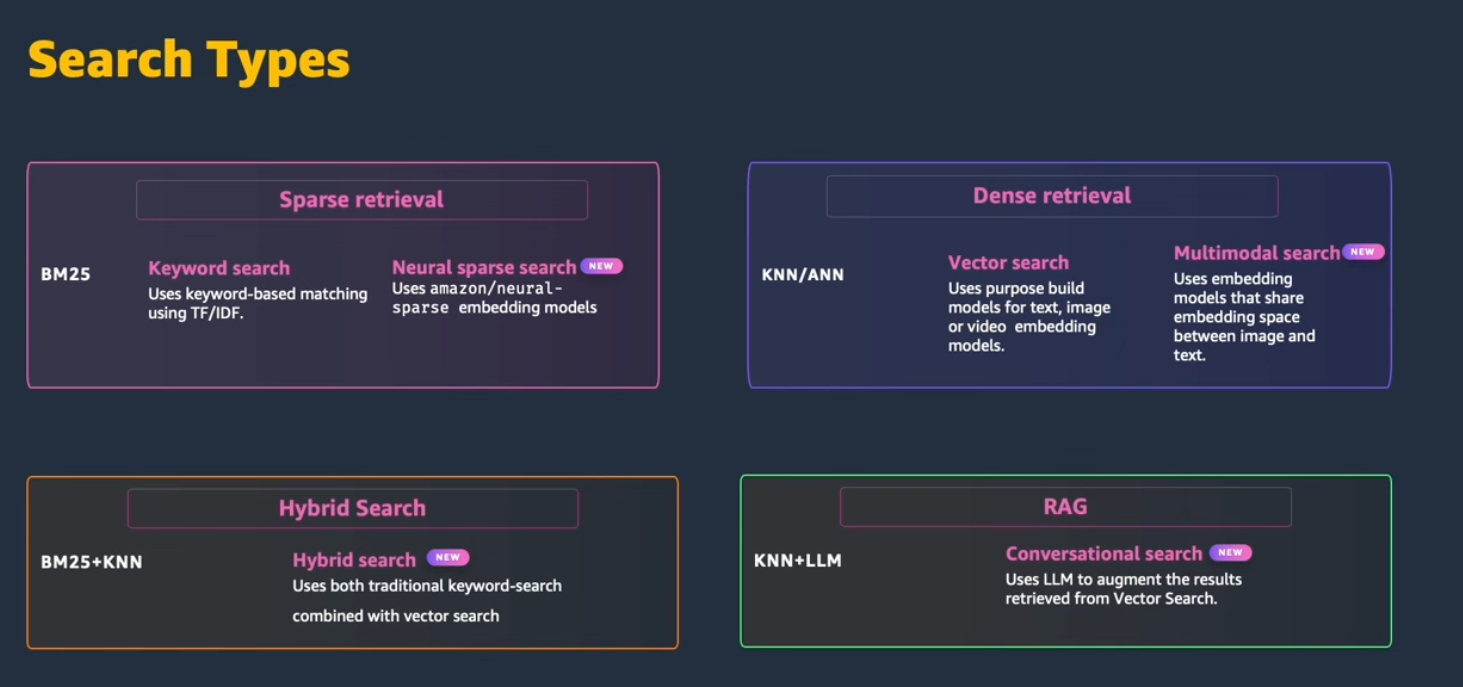

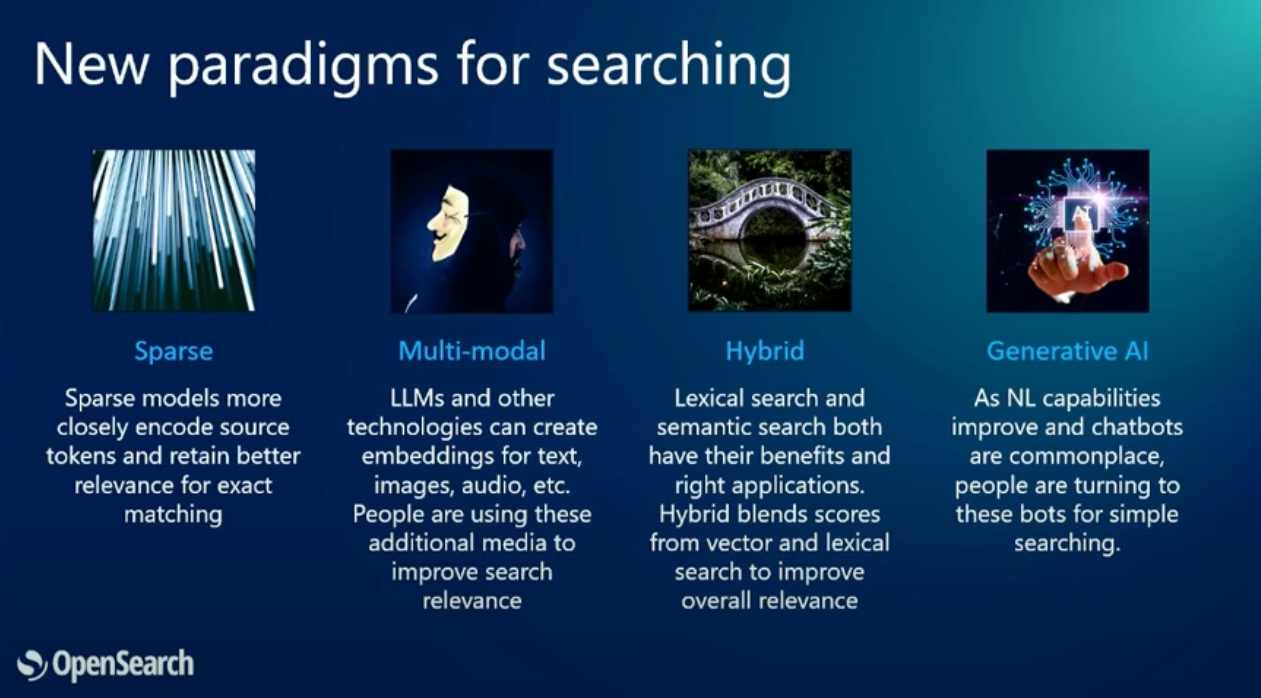

Search Types #

Search using Keyword search #

    GET /my-nlp-index/_search
    {
      "_source": {
        "excludes": [
          "passage_embedding"
        ]
      },
      "query": {
        "match": {
          "text": {
            "query": "wild west"
          }
        }
      }
    }

Search using a neural search #

    GET /my-nlp-index/_search
    {
      "_source": {
        "excludes": [
          "passage_embedding"
        ]
      },
      "query": {
        "neural": {
          "passage_embedding": {
            "query_text": "wild west",
            "model_id": "aVeif4oB5Vm0Tdw8zYO2",
            "k": 5
          }
        }
      }
    }

Search using a hybrid search #

    GET /my-nlp-index/_search?search_pipeline=nlp-search-pipeline
    {
      "_source": {
        "exclude": [
          "passage_embedding"
        ]
      },
      "query": {
        "hybrid": {
          "queries": [
            {
              "match": {
                "text": {
                  "query": "cowboy rodeo bronco"
                }
              }
            },
            {
              "neural": {
                "passage_embedding": {
                  "query_text": "wild west",
                  "model_id": "aVeif4oB5Vm0Tdw8zYO2",
                  "k": 5
                }
              }
            }
          ]
        }
      }
    }
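The three search types differ only in the query clause, which the following sketch makes explicit by building each request body from the same parts. The function names are hypothetical, the field names `text` and `passage_embedding` and the model ID are taken from the requests in this section, and no HTTP call is made; sending the body to the cluster is left to whichever client you use.

```python
import json

EMBEDDING_FIELD = "passage_embedding"  # vector field used in the requests above


def match_clause(text: str) -> dict:
    """Keyword (lexical) clause on the text field."""
    return {"match": {"text": {"query": text}}}


def neural_clause(text: str, model_id: str, k: int = 5) -> dict:
    """Neural clause: the query text is embedded with model_id and the k nearest vectors are retrieved."""
    return {"neural": {EMBEDDING_FIELD: {"query_text": text, "model_id": model_id, "k": k}}}


def search_body(query: dict) -> dict:
    """Wrap a query clause and hide the embedding vector from the returned _source."""
    return {"_source": {"excludes": [EMBEDDING_FIELD]}, "query": query}


def hybrid_body(keyword_text: str, neural_text: str, model_id: str, k: int = 5) -> dict:
    """Hybrid search: both clauses run as sub-queries of one hybrid query."""
    clauses = [match_clause(keyword_text), neural_clause(neural_text, model_id, k)]
    return search_body({"hybrid": {"queries": clauses}})


model_id = "aVeif4oB5Vm0Tdw8zYO2"
keyword = search_body(match_clause("wild west"))
neural = search_body(neural_clause("wild west", model_id))
hybrid = hybrid_body("cowboy rodeo bronco", "wild west", model_id)
payload = json.dumps(hybrid, indent=2)  # JSON text to send as the request body
```

Note that the hybrid request in this section also names a search pipeline in the URL (`search_pipeline=nlp-search-pipeline`); that pipeline must already exist on the cluster and is not created by this sketch.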
