One Hot Encoding

tags :

- related

- Sparse Encoding, Dense Encoding, Dense Encoding

What is One Hot Encoding? #

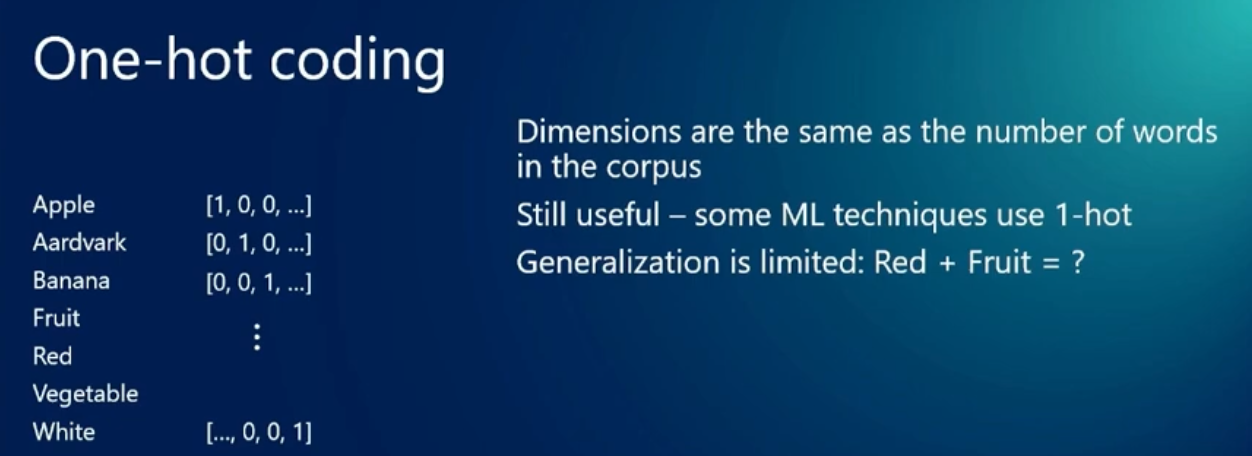

One hot encoding is a process of converting categorical data into a binary format. It is used to convert categorical data into a numerical format so that it can be used in machine learning algorithms. In one hot encoding, each category is represented as a binary vector where all elements are zero except for the element corresponding to the category which is set to one. This is done to avoid the ordinal relationship between categories.

Here’s a concise example to add to your note:

# Original categorical data

colors = ['red', 'blue', 'green']

# One-hot encoded representation

red = [1, 0, 0]

blue = [0, 1, 0]

green = [0, 0, 1]

This example clearly shows how each category gets transformed into a binary vector where only one position contains 1 (hence “one-hot”), while all others are 0. The position of the 1 uniquely identifies the original category.

ref, youtube

= Dimensions are the same as the number of categories or words in the corpus.

ref, youtube

= Dimensions are the same as the number of categories or words in the corpus.

One Hot Encoding vs Sparse Encoding #

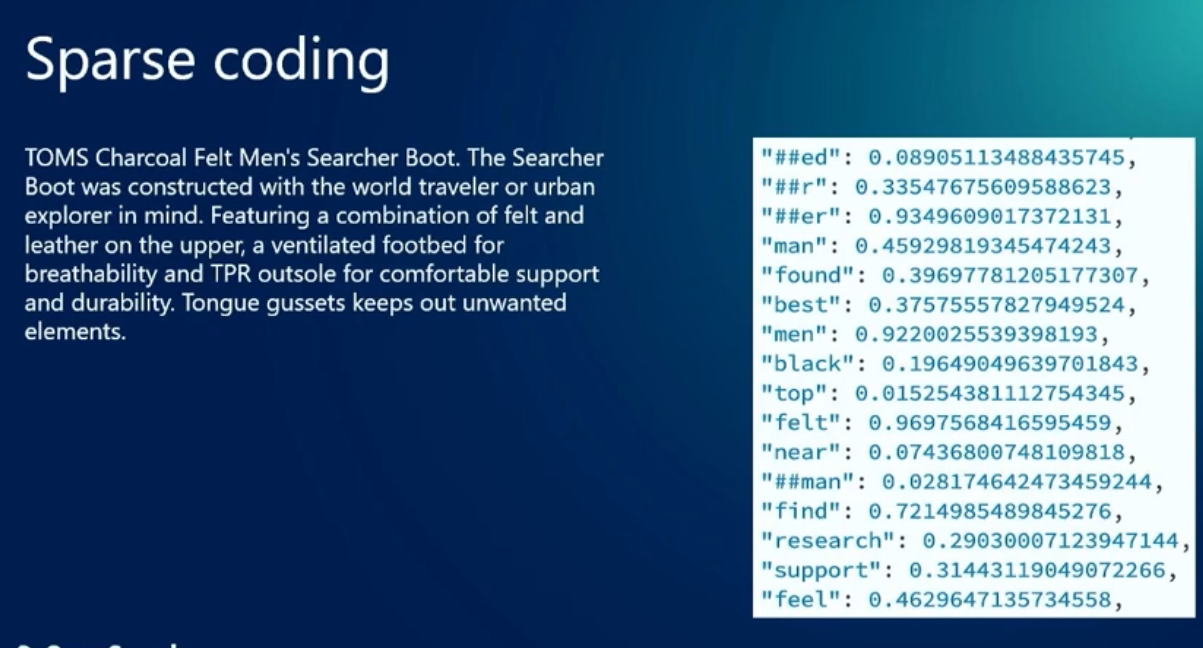

- Sparse encoding is a step better than one hot encoding.

Instead of using all the dimensions (words or categories) in the corpus, the sparse encoding uses only few and fixed dimensions. In another words instead of using 1 million dimensions for 1 million words in the corpus, it uses only 1000 dimensions. LLM generates this sparse vector of 1000 dimensions such that related concepts have higher values in some dimensions and unrelated concepts have zero values in those dimensions.

;; create a diagram to understand this better

Here’s a small ASCII diagram to illustrate the difference between One-Hot and Sparse encoding:

One-Hot Encoding (10,000 words):

word1: [1,0,0,0,0,0,0,...] (9,993 more zeros)

word2: [0,1,0,0,0,0,0,...] (9,993 more zeros)

(Very inefficient, mostly zeros)

Sparse Encoding (1,000 dimensions):

"cat": [0.8,0.1,0,0.3,...] (996 more values)

"dog": [0.7,0.2,0,0.4,...] (996 more values)

(More efficient, related words share similar patterns)

Key points:

- One-Hot: Single 1, rest zeros, dimension = vocabulary size

- Sparse: Multiple non-zero values(related concepts), fixed smaller dimension, captures relationships, many zeros values(unrelated concepts)