LCEL

tags:

- similar

- LangGraph

Acronym: LangChain Expression Language #

minimal example

from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate
from langchain_openai import ChatOpenAI

prompt = ChatPromptTemplate.from_template("tell me a short joke about {topic}")
model = ChatOpenAI(model="gpt-4")
output_parser = StrOutputParser()

chain = prompt | model | output_parser

chain.invoke({"topic": "ice cream"})

“Why don’t ice creams ever get invited to parties?\n\nBecause they always drip when things heat up!”

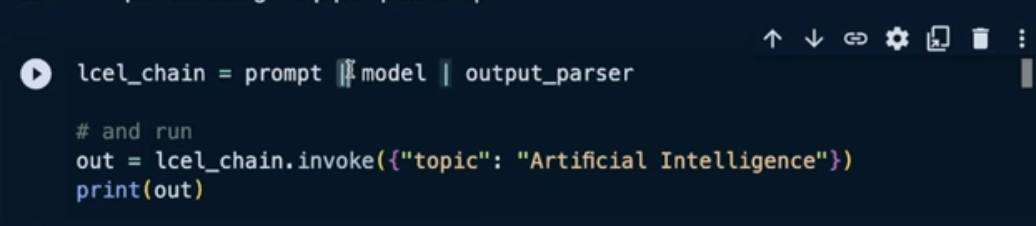

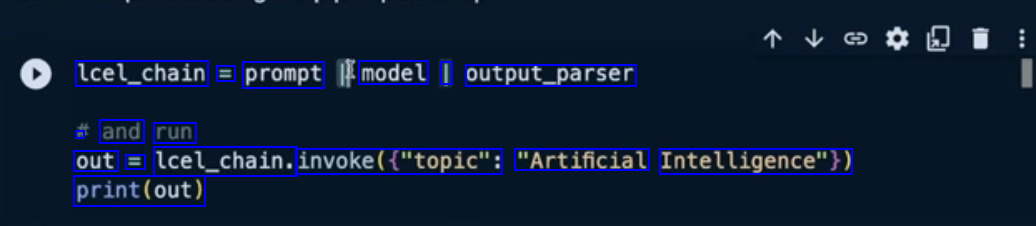

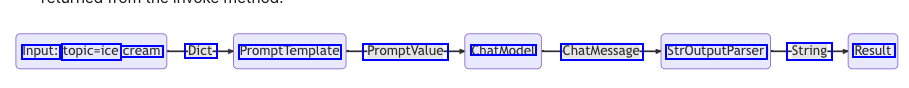

We pass in the user input on the desired topic as {"topic": "ice cream"}.

- The prompt component takes the user input and uses the topic to fill in the template, producing a PromptValue.

- The model component takes the generated PromptValue and passes it to the OpenAI chat model for evaluation. The model's output is a ChatMessage object (specifically an AIMessage).

- Finally, the output_parser component takes in the ChatMessage and transforms it into a Python string, which is returned from the invoke method.
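To make the type at each step concrete, here is a minimal plain-Python sketch of the same three-stage flow. It does not use LangChain. PromptValue and ChatMessage are hypothetical stand-in dataclasses, and the model is a stub that returns a canned reply instead of calling OpenAI.

```python
from dataclasses import dataclass


@dataclass
class PromptValue:
    text: str  # hypothetical stand-in for LangChain's PromptValue


@dataclass
class ChatMessage:
    content: str  # hypothetical stand-in for the AIMessage a chat model returns


def prompt(inputs: dict) -> PromptValue:
    # fill the {topic} template variable from the input dict
    return PromptValue(f"tell me a short joke about {inputs['topic']}")


def model(value: PromptValue) -> ChatMessage:
    # stub: a real chat model would send value.text to the OpenAI API here
    return ChatMessage(f"(joke for: {value.text})")


def output_parser(message: ChatMessage) -> str:
    # StrOutputParser-like step: pull the plain string out of the message
    return message.content


result = output_parser(model(prompt({"topic": "ice cream"})))
print(result)  # (joke for: tell me a short joke about ice cream)
```

Composing the three functions by hand, as output_parser(model(prompt(...))), is the step that the | operator automates in LCEL.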

RAG #

# Requires:
# pip install langchain langchain-community langchain-openai docarray tiktoken

from langchain_community.vectorstores import DocArrayInMemorySearch
from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.runnables import RunnableParallel, RunnablePassthrough
from langchain_openai.chat_models import ChatOpenAI
from langchain_openai.embeddings import OpenAIEmbeddings

vectorstore = DocArrayInMemorySearch.from_texts(
    ["harrison worked at kensho", "bears like to eat honey"],
    embedding=OpenAIEmbeddings(),
)
retriever = vectorstore.as_retriever()

template = """Answer the question based only on the following context:
{context}

Question: {question}
"""
prompt = ChatPromptTemplate.from_template(template)
model = ChatOpenAI()
output_parser = StrOutputParser()

setup_and_retrieval = RunnableParallel(
    {"context": retriever, "question": RunnablePassthrough()}
)
chain = setup_and_retrieval | prompt | model | output_parser

chain.invoke("where did harrison work?")
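Below is a dependency-free sketch of what the RAG chain does with its input before the model is called. It assumes a hypothetical word-overlap retriever in place of the embedding-based DocArrayInMemorySearch retriever. Both keys are computed from the same question string, and the prompt is then filled from the resulting dict.

```python
DOCS = ["harrison worked at kensho", "bears like to eat honey"]

TEMPLATE = """Answer the question based only on the following context:
{context}

Question: {question}
"""


def toy_retriever(question: str, k: int = 1) -> list:
    """Hypothetical retriever: rank docs by shared words instead of embeddings."""
    q_words = set(question.lower().replace("?", "").split())
    ranked = sorted(DOCS, key=lambda doc: len(q_words & set(doc.split())), reverse=True)
    return ranked[:k]


def setup_and_retrieval(question: str) -> dict:
    # like RunnableParallel: each key is computed from the same input
    return {"context": toy_retriever(question), "question": question}


inputs = setup_and_retrieval("where did harrison work?")
prompt_text = TEMPLATE.format(**inputs)
print(prompt_text)
```

The word-overlap ranking is only a stand-in. A real retriever returns Document objects ranked by embedding similarity.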

Understanding #

Demystifying LangChain Expression Language

Building blocks #

Interface #

To make it as easy as possible to create custom chains, LangChain implements a “Runnable” protocol for most of its components. This standard interface makes it easy to define custom chains and to invoke them in a uniform way. The standard interface includes:

Methods #

- stream: stream back chunks of the response

- invoke: call the chain on an input

- batch: call the chain on a list of inputs

Async Methods #

These also have corresponding async methods:

- astream: stream back chunks of the response async

- ainvoke: call the chain on an input async

- abatch: call the chain on a list of inputs async

- astream_log: stream back intermediate steps as they happen, in addition to the final response

- astream_events (beta): stream events as they happen in the chain (introduced in langchain-core 0.1.14)
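The sketch below is a rough illustration of how these methods relate. It defines a hypothetical ToyRunnable that mirrors only the method names of the interface and is not LangChain's implementation. batch and abatch are built from invoke and ainvoke, and stream yields single characters as toy "chunks". In LangChain, a component that doesn't stream natively instead yields its whole output as one chunk.

```python
import asyncio
from typing import Any, Callable, Iterator, List


class ToyRunnable:
    """Hypothetical minimal class that mirrors the Runnable method names."""

    def __init__(self, func: Callable[[Any], Any]):
        self.func = func

    def invoke(self, value: Any) -> Any:
        # call the wrapped function on a single input
        return self.func(value)

    def batch(self, values: List[Any]) -> List[Any]:
        # call invoke on each input, preserving order
        return [self.invoke(v) for v in values]

    def stream(self, value: Any) -> Iterator[str]:
        # toy streaming: yield the result one character at a time
        yield from str(self.invoke(value))

    async def ainvoke(self, value: Any) -> Any:
        # run the synchronous function in a worker thread so the event loop is not blocked
        return await asyncio.to_thread(self.invoke, value)

    async def abatch(self, values: List[Any]) -> List[Any]:
        # run all ainvoke calls concurrently; gather keeps input order
        return list(await asyncio.gather(*(self.ainvoke(v) for v in values)))


upper = ToyRunnable(str.upper)
print(upper.invoke("hi"))                     # HI
print(upper.batch(["a", "b"]))                # ['A', 'B']
print(list(upper.stream("ok")))               # ['O', 'K']
print(asyncio.run(upper.abatch(["x", "y"])))  # ['X', 'Y']
```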

Input types and output types #

Runnable #

class Runnable:
    def __init__(self, func):
        self.func = func

    def __or__(self, other):
        def chained_func(*args, **kwargs):
            # the other func consumes the result of this func
            return other(self.func(*args, **kwargs))
        return Runnable(chained_func)

    def __call__(self, *args, **kwargs):
        return self.func(*args, **kwargs)

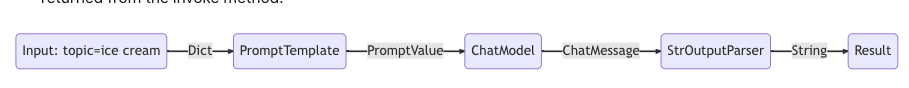

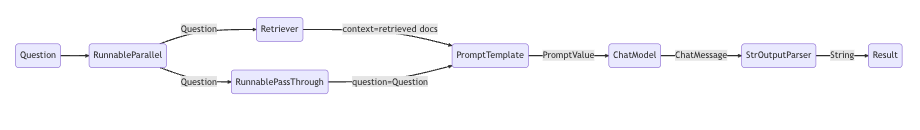

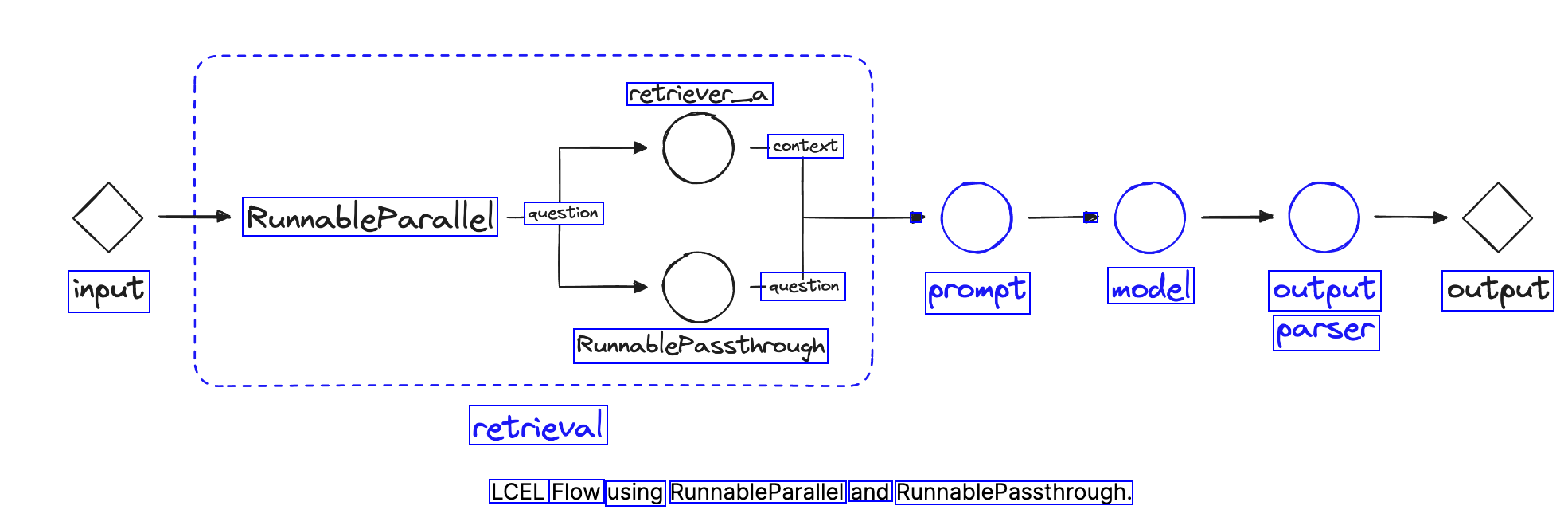

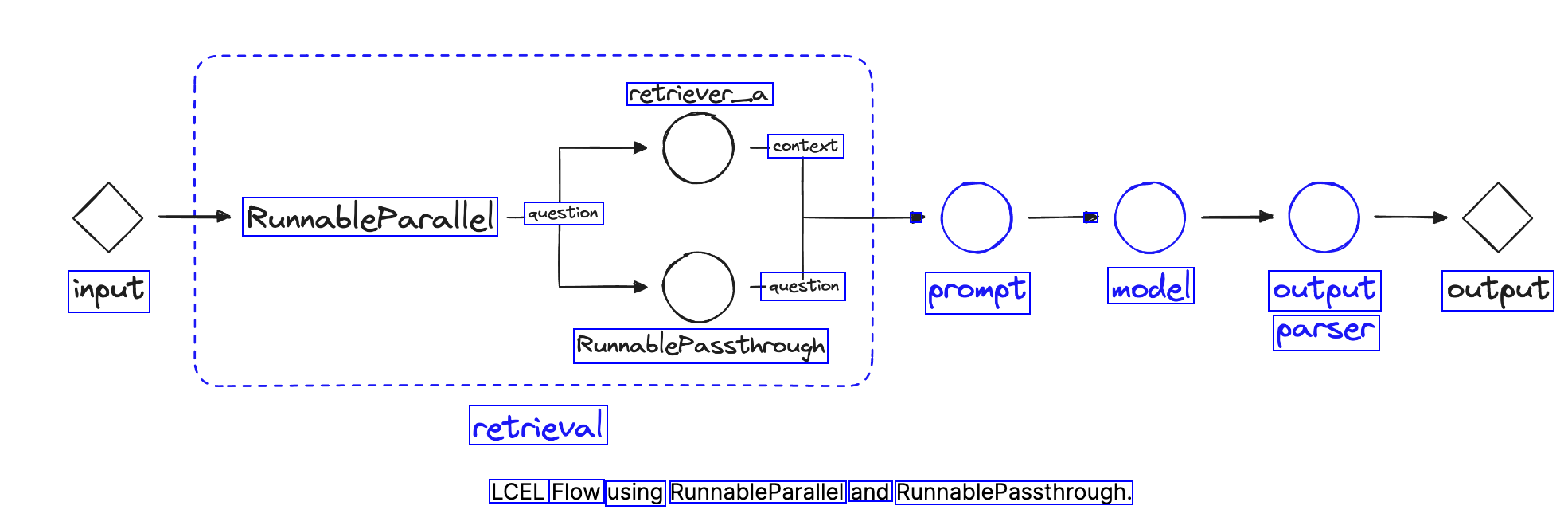

RunnableParallel #

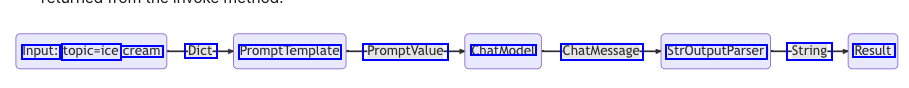

The RunnableParallel object allows us to define multiple values and operations and run them all in parallel.

RunnablePassthrough #

The RunnablePassthrough object acts as a “passthrough”. It takes the input to the current component (retrieval) and passes it through unchanged, so the component's output includes it under the “question” key.

retrieval = RunnableParallel(
    {"context": retriever_a, "question": RunnablePassthrough()}
)
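Here is a dependency-free sketch of the two pieces working together, under a few assumptions. run_parallel is a hypothetical helper that submits every branch, with the same input, to a thread pool. passthrough plays the role of RunnablePassthrough. slow_retriever and slow_word_count are stand-ins that sleep to simulate work, so the overlap in time is visible. The resulting dict is what the next component (the prompt) would receive.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict


def run_parallel(steps: Dict[str, Callable[[Any], Any]], value: Any) -> Dict[str, Any]:
    """Hypothetical RunnableParallel-like helper: run every branch on the same input concurrently."""
    with ThreadPoolExecutor(max_workers=len(steps)) as pool:
        futures = {key: pool.submit(fn, value) for key, fn in steps.items()}
        # collect results under the same keys that were passed in
        return {key: future.result() for key, future in futures.items()}


def passthrough(value: Any) -> Any:
    # RunnablePassthrough-like: hand the input on unchanged
    return value


def slow_retriever(question: str) -> list:
    # stand-in retriever: pretend the lookup takes 0.2 s
    time.sleep(0.2)
    return ["harrison worked at kensho"]


def slow_word_count(question: str) -> str:
    # second hypothetical branch, only here to show that branches overlap in time
    time.sleep(0.2)
    return f"{len(question.split())} words"


start = time.perf_counter()
retrieval = run_parallel(
    {"context": slow_retriever, "word_count": slow_word_count, "question": passthrough},
    "where did harrison work?",
)
elapsed = time.perf_counter() - start
print(retrieval)
print(f"{elapsed:.2f}s")  # roughly 0.2 s rather than 0.4 s, because the two sleeps overlap
```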

Using them

def add_five(x):
    return x + 5

def multiply_by_two(x):
    return x * 2

# wrap the functions with Runnable
add_five = Runnable(add_five)
multiply_by_two = Runnable(multiply_by_two)

# run them using the object approach
chain = add_five.__or__(multiply_by_two)  # equivalent to add_five | multiply_by_two
chain(3)  # (3 + 5) * 2 = 16

# chain the runnable functions together with the | operator
chain = add_five | multiply_by_two

# invoke the chain
chain(3)  # returns 16

Why LCEL? #

Easier declarative syntax #

from typing import List

import openai

prompt_template = "Tell me a short joke about {topic}"
client = openai.OpenAI()

def call_chat_model(messages: List[dict]) -> str:
    response = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=messages,
    )
    return response.choices[0].message.content

def invoke_chain(topic: str) -> str:
    prompt_value = prompt_template.format(topic=topic)
    messages = [{"role": "user", "content": prompt_value}]
    return call_chat_model(messages)

invoke_chain("ice cream")

With LCEL, the same chain becomes:

from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser
from langchain_core.runnables import RunnablePassthrough

prompt = ChatPromptTemplate.from_template(
    "Tell me a short joke about {topic}"
)
output_parser = StrOutputParser()
model = ChatOpenAI(model="gpt-3.5-turbo")

chain = (
    {"topic": RunnablePassthrough()}
    | prompt
    | model
    | output_parser
)

chain.invoke("ice cream")
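The leading dict {"topic": RunnablePassthrough()} works because LCEL coerces a plain dict into a RunnableParallel when it is piped into a runnable. Python first tries dict.__or__, which returns NotImplemented for a non-dict operand, and then falls back to the right operand's __ror__. The sketch below extends the toy Runnable from these notes with a hypothetical coerce helper and an __ror__ method to show the mechanism. It is a simplification, not LangChain's source.

```python
from typing import Any


def coerce(obj: Any) -> "Runnable":
    """Hypothetical helper: dicts become a parallel step, callables get wrapped."""
    if isinstance(obj, Runnable):
        return obj
    if isinstance(obj, dict):
        steps = {key: coerce(value) for key, value in obj.items()}
        # every branch receives the same input; results are gathered under the dict's keys
        return Runnable(lambda x: {key: step(x) for key, step in steps.items()})
    return Runnable(obj)


class Runnable:
    def __init__(self, func):
        self.func = func

    def __call__(self, x):
        return self.func(x)

    def __or__(self, other):
        # self | other: run self first, then feed its output to other
        nxt = coerce(other)
        return Runnable(lambda x: nxt(self(x)))

    def __ror__(self, other):
        # other | self, reached when other (e.g. a dict) can't handle | itself
        prev = coerce(other)
        return Runnable(lambda x: self(prev(x)))


passthrough = Runnable(lambda x: x)
prompt = Runnable(lambda d: f"Tell me a short joke about {d['topic']}")

chain = {"topic": passthrough} | prompt
print(chain("ice cream"))  # Tell me a short joke about ice cream
```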

Understanding a more involved example #

from langchain_core.prompts import ChatPromptTemplate
from langchain_core.runnables import (
    RunnableParallel,
    RunnablePassthrough
)

# vecstore_a and vecstore_b are assumed to be existing vector stores;
# model and output_parser are reused from the examples above
retriever_a = vecstore_a.as_retriever()
retriever_b = vecstore_b.as_retriever()

prompt_str = """Answer the question below using the context:

Context: {context}

Question: {question}

Answer: """
prompt = ChatPromptTemplate.from_template(prompt_str)

retrieval = RunnableParallel(
    {"context": retriever_a, "question": RunnablePassthrough()}
)

chain = retrieval | prompt | model | output_parser
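retriever_b is created above but never used. One hypothetical way to use both is to give each retriever its own key in the parallel step and reference both keys in the prompt. In LCEL that step could be written as RunnableParallel({"context_a": retriever_a, "context_b": retriever_b, "question": RunnablePassthrough()}). The dependency-free sketch below shows only the data flow. The stub retrievers, the keys context_a and context_b, and the template are illustrative assumptions.

```python
from typing import Dict, List

PROMPT_STR = """Answer the question below using the context:

Context: {context_a}
{context_b}

Question: {question}

Answer: """


def retriever_a(question: str) -> List[str]:
    # stub standing in for vecstore_a.as_retriever()
    return ["harrison worked at kensho"]


def retriever_b(question: str) -> List[str]:
    # stub standing in for vecstore_b.as_retriever()
    return ["bears like to eat honey"]


def retrieval(question: str) -> Dict[str, object]:
    # parallel step: one key per retriever plus the passed-through question
    return {
        "context_a": retriever_a(question),
        "context_b": retriever_b(question),
        "question": question,
    }


prompt_text = PROMPT_STR.format(**retrieval("where did harrison work?"))
print(prompt_text)
```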

OCR of Images #

2024-02-18_11-46-40_screenshot.png #

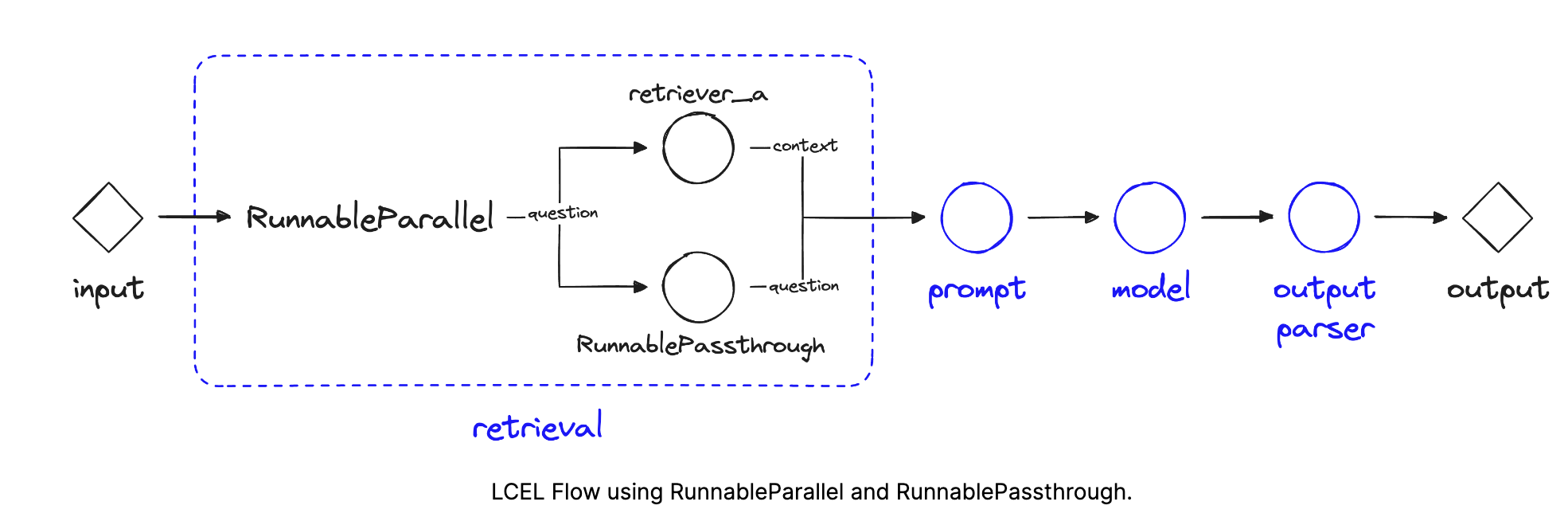

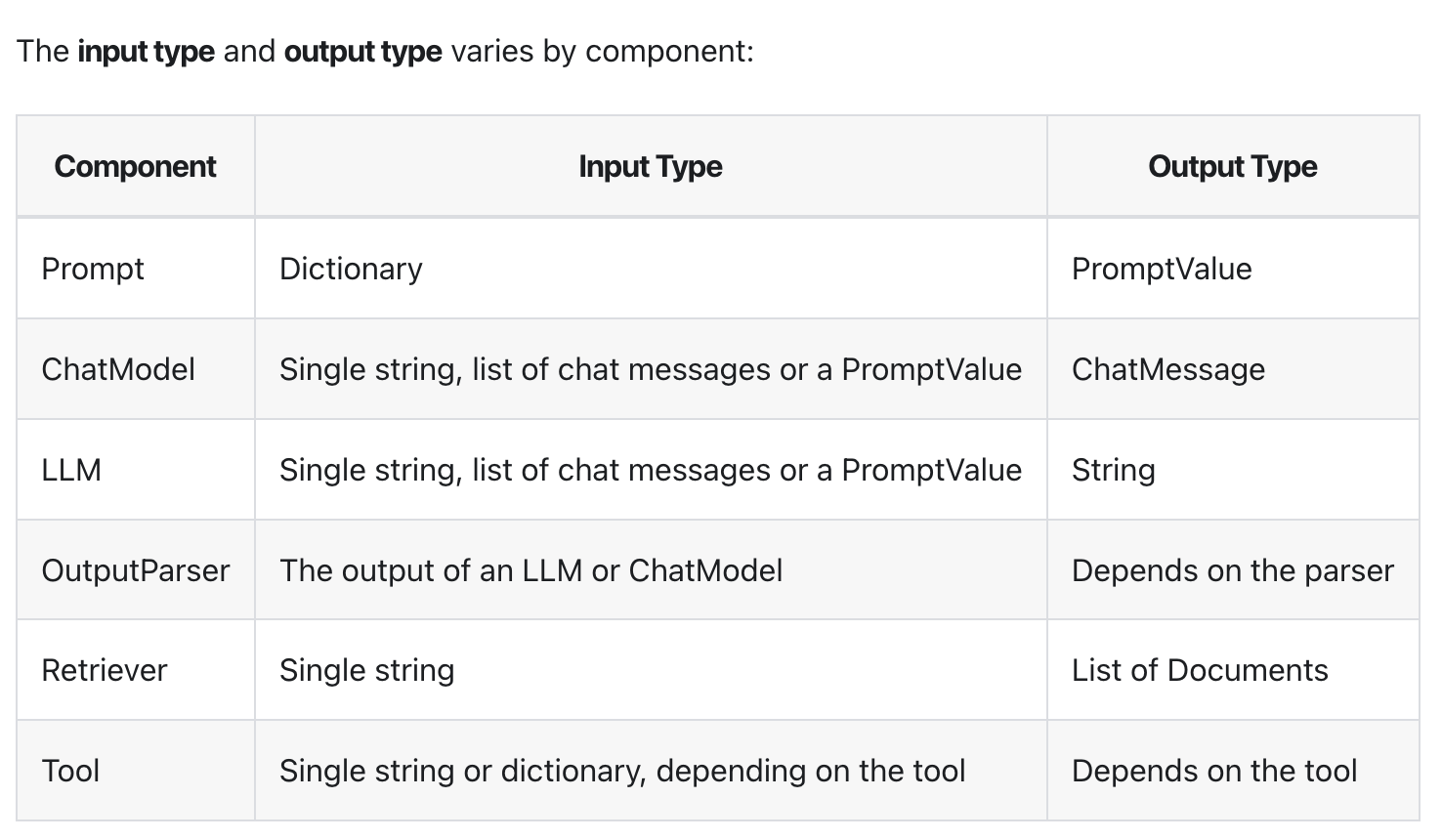

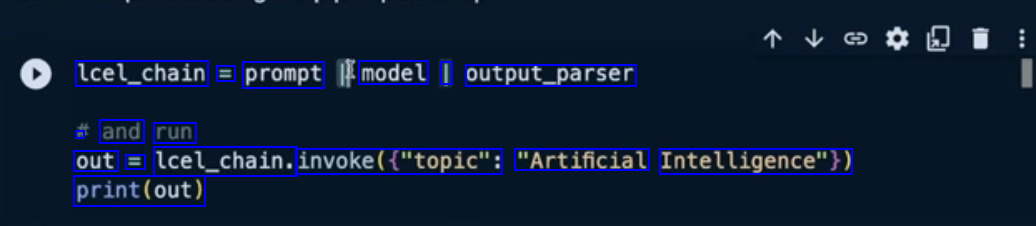

lcel_chain = prompt | model | output_parser
# and run
out = lcel_chain.invoke({"topic": "Artificial Intelligence"})
print(out)

2024-02-18_11-49-28_screenshot.png #

Input: {"topic": "ice cream"} (Dict) -> PromptTemplate -> PromptValue -> ChatModel -> ChatMessage -> StrOutputParser -> String -> Result

2024-02-18_11-50-36_screenshot.png #

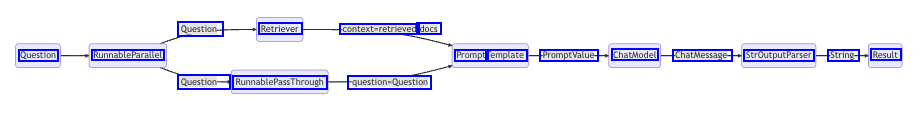

Question -> RunnableParallel, with two branches: Retriever (context = retrieved docs) and RunnablePassthrough (question = Question) -> PromptTemplate -> PromptValue -> ChatModel -> ChatMessage -> StrOutputParser -> String -> Result

2024-03-11_13-02-32_screenshot.png #

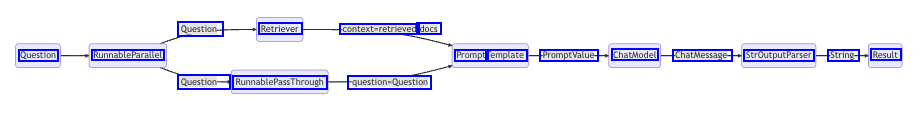

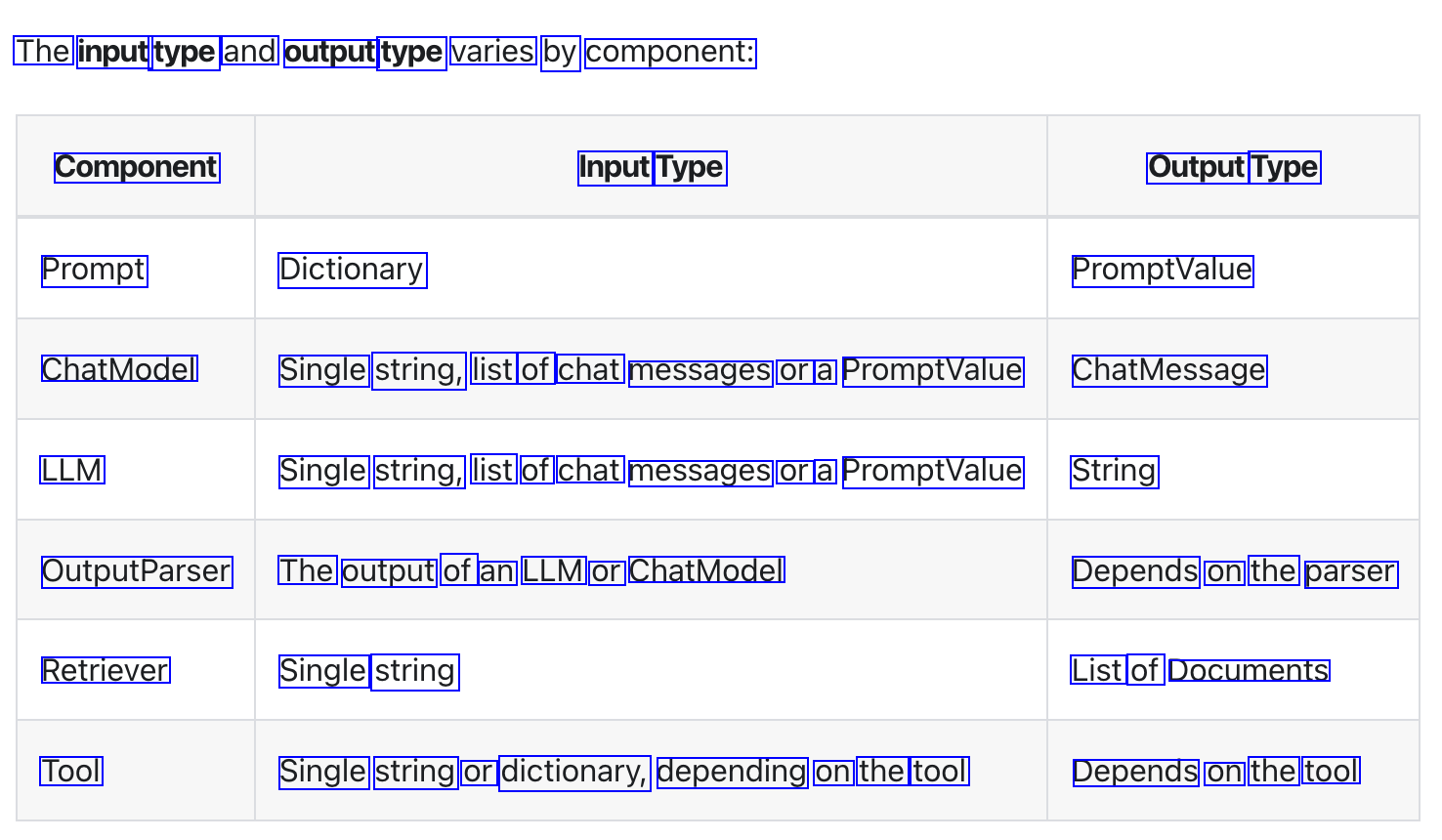

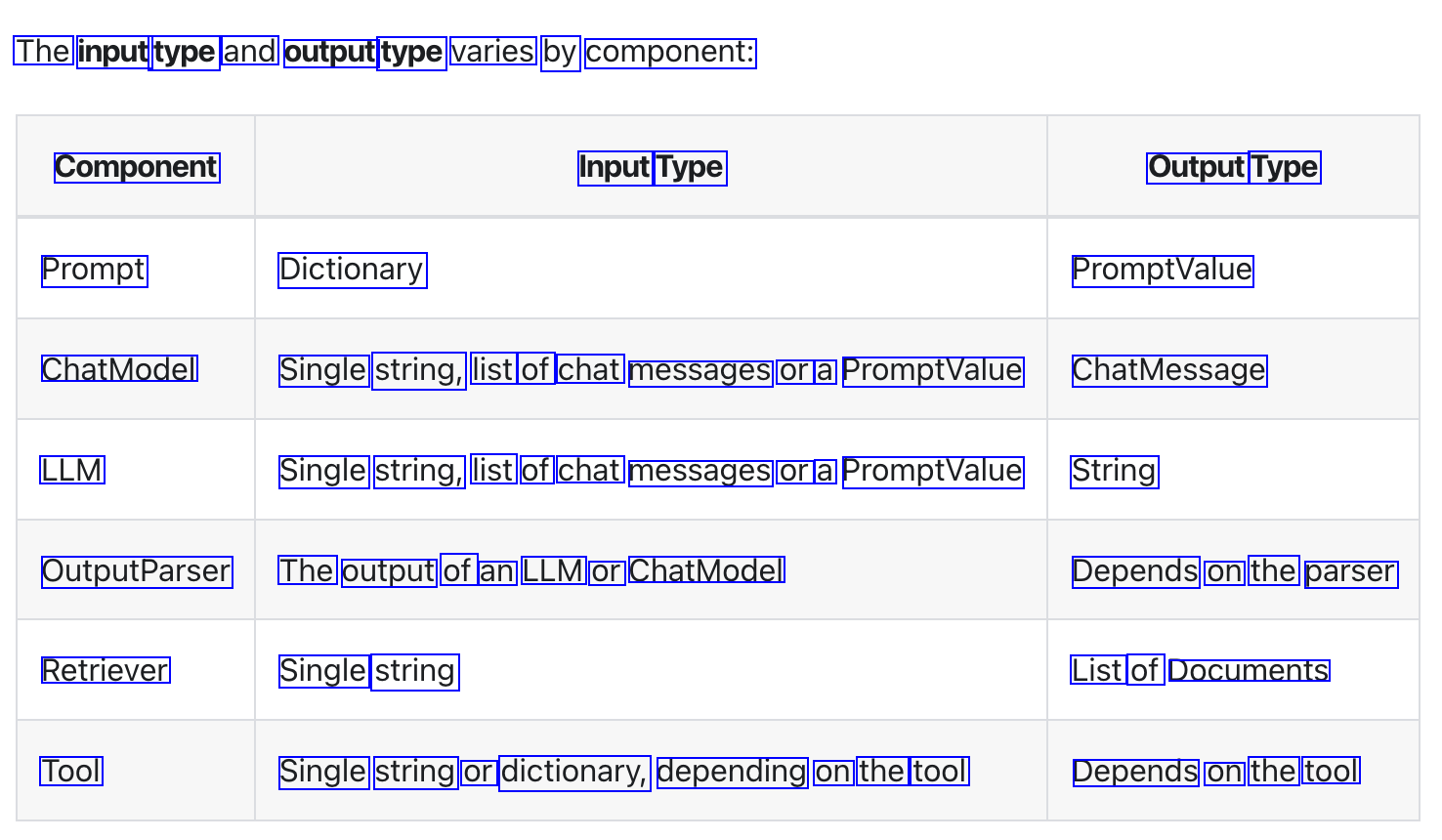

The input type and output type vary by component:

| Component | Input Type | Output Type |
|---|---|---|
| Prompt | Dictionary | PromptValue |
| ChatModel | Single string, list of chat messages or a PromptValue | ChatMessage |
| LLM | Single string, list of chat messages or a PromptValue | String |
| OutputParser | The output of an LLM or ChatModel | Depends on the parser |
| Retriever | Single string | List of Documents |
| Tool | Single string or dictionary, depending on the tool | Depends on the tool |

2024-03-11_12-57-45_screenshot.png #

LCEL flow using RunnableParallel and RunnablePassthrough: input question -> retrieval (a RunnableParallel in which retriever_a produces context and RunnablePassthrough forwards question) -> prompt -> model -> output parser -> output.
