Dense Vector

- tags

- ML, LLM, Semantic Search

Summary #

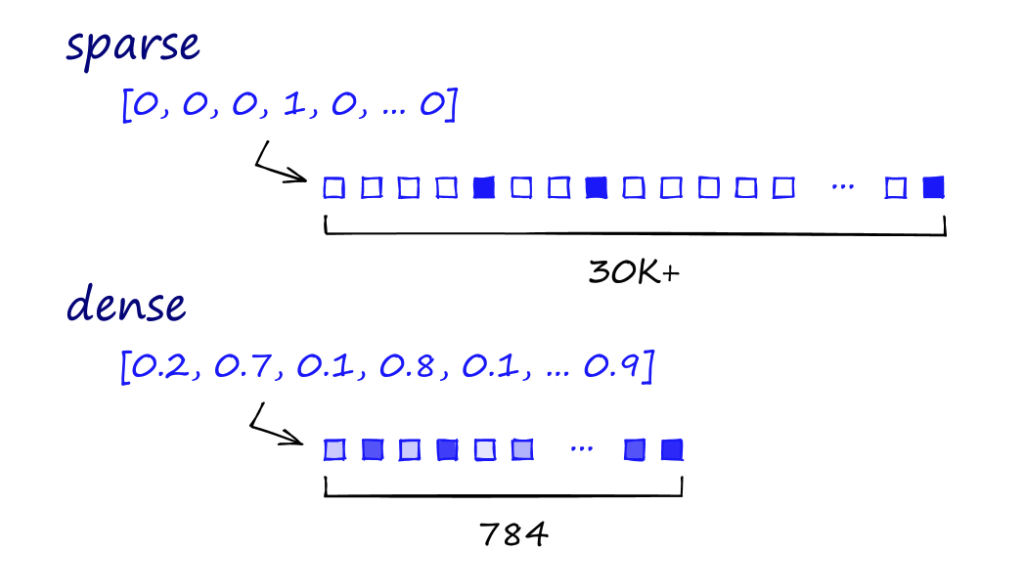

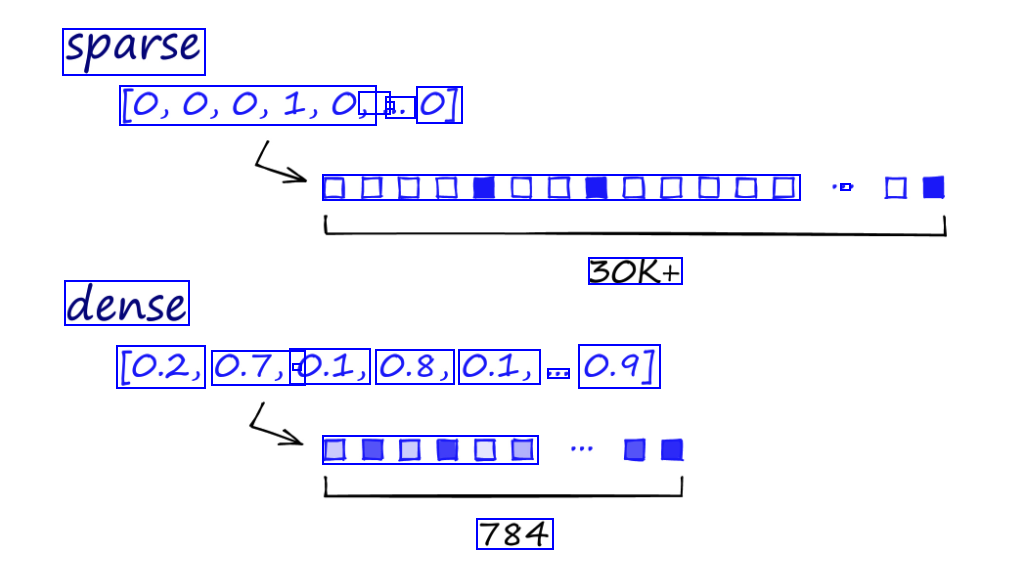

- Sparse retrieval methods have advantages such as explainability and a natural fit with classic algorithms like BM25. However, a token-based sparse representation of a text does not fully capture the semantics of each term in the context of the whole text. This problem cannot be completely solved just by weighting the importance of each term or by document expansion.

On the other hand, many methods are now available for obtaining contextual embeddings of tokens or of a whole text. These methods represent a text as a vector in a vector space with a predefined number of dimensions.

The dimension of the vectors does not depend on the length of the text. Vectors of this kind are usually referred to as dense vectors.
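To make the fixed-dimension property concrete, here is a minimal sketch in Python. It is not a real embedding model: `token_vector` is a hypothetical stand-in that derives a deterministic pseudo-random vector from each token, and `DIM = 8` is an arbitrary assumption. Mean pooling is just one simple way to collapse token vectors into a single text vector. The only point is that the output length stays the same for short and long inputs.

```python
import hashlib
import random

DIM = 8  # assumed embedding dimension, chosen only for illustration


def token_vector(token: str, dim: int = DIM) -> list[float]:
    """Hypothetical stand-in for a learned token embedding.

    Returns a deterministic pseudo-random vector seeded by the token,
    so it carries no real semantic information.
    """
    seed = int.from_bytes(hashlib.sha256(token.encode("utf-8")).digest()[:8], "big")
    rng = random.Random(seed)
    return [rng.uniform(-1.0, 1.0) for _ in range(dim)]


def embed(text: str, dim: int = DIM) -> list[float]:
    """Mean-pool the token vectors into one dense vector of length `dim`."""
    tokens = text.lower().split()
    if not tokens:
        return [0.0] * dim
    vectors = [token_vector(t, dim) for t in tokens]
    # zip(*vectors) iterates over coordinates; average each coordinate.
    return [sum(column) / len(tokens) for column in zip(*vectors)]


short_vec = embed("dense vectors")
long_vec = embed("dense vectors have a fixed number of dimensions no matter how long the text is")
print(len(short_vec), len(long_vec))  # both lengths equal DIM
```

With a real model the values would come from trained weights, but the shape argument is the same: the vector length is set by the model, not by the input.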

Semantically close texts are usually represented by vectors that are close to each other in the vector space. The family of methods based on dense vectors is referred to as dense retrieval.
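The idea of "close in the vector space" can be sketched with cosine similarity, one common closeness measure (others, such as dot product or Euclidean distance, are also used). The vectors and document names below are made up by hand for illustration and do not come from any model. The helper `rank` is likewise a hypothetical name.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank(query_vec: list[float], doc_vecs: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Return (doc_id, score) pairs sorted from most to least similar."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hand-made toy vectors: the first two documents point in a similar
# direction to the query, the third does not.
docs = {
    "cats": [0.9, 0.1, 0.0],
    "dogs": [0.8, 0.3, 0.1],
    "stocks": [0.0, 0.2, 0.9],
}
query = [0.85, 0.2, 0.05]

ranking = rank(query, docs)
for doc_id, score in ranking:
    print(f"{doc_id}: {score:.3f}")
```

In this toy setup the unrelated document ends up last in the ranking. A real dense retrieval system would typically replace the exhaustive loop with an approximate nearest-neighbor index once the collection grows.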

Dense Vector vs Sparse Vector #

OCR of Images #

2024-02-27_12-21-13_screenshot.png #

sparse: [0, 0, 0, 1, 0, ..., 0] (30K+)
dense: [0.2, 0.7, 0.1, ..., 0.8, 0.1, 0.9] (784)