BERT

tags :

Acronym

Bidirectional Encoder Representations from Transformers

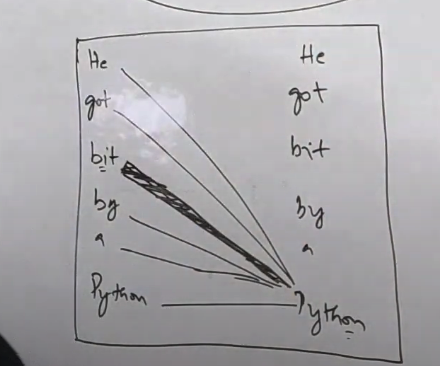

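BERT distinguishes the two senses of the word “Python” from context: in “He got bit by a Python” it refers to the snake, while in “Python is a programming language” it refers to the language.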

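To capture this context, BERT compares each word with every other word in the sentence (through self-attention), looking at the words on both sides at once. This is what “bidirectional” in its name refers to.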
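The “compare with every other word” step can be sketched as single-head scaled dot-product self-attention in plain Python. This is a simplified sketch, not BERT itself: it assumes hypothetical 3-dimensional toy embeddings (`EMB`), uses the input vectors directly as queries, keys and values (real BERT learns separate projections and stacks many heads and layers), and the helper names `self_attention` and `contextual` are illustrative only.

```python
import math

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Single-head scaled dot-product self-attention without learned
    projections: queries, keys and values are the input vectors themselves."""
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        # Compare this word with every word in the sentence (itself included).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        # The new representation is a weighted mix of all the word vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)]
        outputs.append(mixed)
    return outputs

# Hypothetical toy embeddings (3 dimensions), not real BERT weights.
EMB = {
    "he": [1.0, 0.0, 0.0],
    "got": [0.5, 0.5, 0.0],
    "bit": [0.9, 0.1, 0.0],
    "by": [0.2, 0.2, 0.2],
    "a": [0.1, 0.1, 0.1],
    "python": [0.5, 0.5, 0.5],
    "is": [0.2, 0.2, 0.2],
    "programming": [0.0, 0.1, 0.9],
    "language": [0.0, 0.0, 1.0],
}

def contextual(sentence, word):
    # Run self-attention over the sentence and return the output for `word`.
    tokens = sentence.lower().split()
    out = self_attention([EMB[t] for t in tokens])
    return out[tokens.index(word)]

snake_sense = contextual("he got bit by a python", "python")
language_sense = contextual("python is a programming language", "python")
print([round(x, 3) for x in snake_sense])
print([round(x, 3) for x in language_sense])
```

The input vector for “python” is identical in both sentences, but because it is mixed with different neighbours, its output leans toward the first toy dimension in the snake sentence and toward the third in the programming sentence.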


BERT vs Word2Vec

Word2Vec models generate context-independent embeddings: there is exactly one vector (numeric) representation per word. If a word has several senses, they are all merged into that single vector.

BERT, in contrast, generates context-dependent embeddings: the same word can have several different vector representations, depending on the context in which it is used.
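The difference can be illustrated by comparing a plain table lookup with a context-mixing function. A minimal sketch, assuming hypothetical toy vectors (`STATIC`) and a single projection-free attention step standing in for BERT; the names `static_embedding`, `contextual_embedding` and `cosine` are illustrative, not from any library.

```python
import math

# Hypothetical toy vectors (3 dimensions), not trained Word2Vec or BERT weights.
STATIC = {
    "he": [1.0, 0.0, 0.0],
    "got": [0.5, 0.5, 0.0],
    "bit": [0.9, 0.1, 0.0],
    "by": [0.2, 0.2, 0.2],
    "a": [0.1, 0.1, 0.1],
    "python": [0.5, 0.5, 0.5],
    "is": [0.2, 0.2, 0.2],
    "programming": [0.0, 0.1, 0.9],
    "language": [0.0, 0.0, 1.0],
}

def static_embedding(word):
    # Word2Vec-style: a table lookup, so the surrounding words are ignored.
    return STATIC[word]

def contextual_embedding(tokens, index):
    # Attention-style: mix all word vectors in the sentence, weighted by the
    # softmax of their scaled dot-product similarity to the target word.
    query = STATIC[tokens[index]]
    vectors = [STATIC[t] for t in tokens]
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, v)) / math.sqrt(d) for v in vectors]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    return [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)]

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

s1 = "he got bit by a python".split()
s2 = "python is a programming language".split()

# Static: the same vector in both sentences.
static_sim = cosine(static_embedding("python"), static_embedding("python"))
# Contextual: the two "python" vectors point in different directions.
contextual_sim = cosine(contextual_embedding(s1, s1.index("python")),
                        contextual_embedding(s2, s2.index("python")))
print(static_sim, contextual_sim)
```

The static lookup gives a cosine similarity of 1 (up to floating-point rounding) between the two uses of “python”, while the contextual version gives a value below 1, mirroring the one-vector versus many-vectors distinction above.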

OCR of Images

2023-12-19_15-18-56_screenshot.png

Bidirectional Encoder Representations from Transformers. A: He got bit by a Python. B: Python is a programming language.

2023-12-19_15-19-45_screenshot.png

He got bit by a Python (each word appears twice in the screenshot).